A Cut Too Deep

Why Your AI Tasks Keep Failing—And How to Fix Them

The Wrong Scalpel Problem

This week, I was speaking to a group of students, professionals, and leaders when someone asked: "I try to vibe code and do AI dev but feel like I am either tasking it with things that are too big or too small and I can't get the acceleration that I hear about... help."

It's the wrong scalpel problem—but it's also something deeper.

The Surgeon's Wisdom

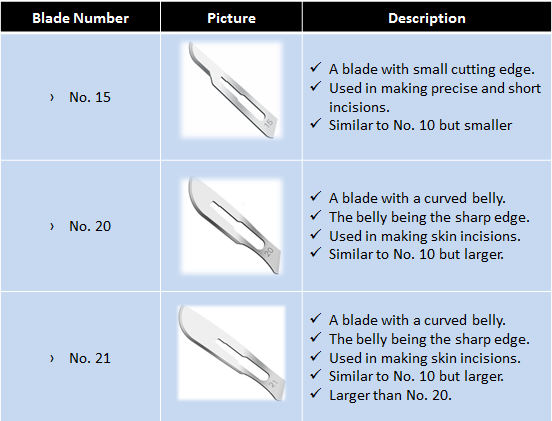

I told them about surgical scalpels. A No. 15 blade makes precise incisions through delicate tissue. A No. 20 cuts deeper. Use the wrong one and you either slice through layers you never intended to touch (blood vessels when you meant to cut muscle) or barely scratch the surface when you need to go deep.

But here's the kicker: a skilled surgeon can make any scalpel work. Your skill overrides the weakness of the tooling. The right tool helps, but mastery transcends the tool itself.

When I shared this with my friend Abe, who's chief of minimally invasive and bariatric surgery at Columbia, he pushed it further: "Go to war with the army you have, not the army you wish you had."

He nailed the deeper issue: "We're all still learning what AI needs and can do. You tell it 'A' and expect it to translate into 'x, y, and z.' In some areas it may do that, but in other areas it translates into 'x' and confabulates the gaps."

The Calibration Sweet Spot

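The problem isn't the AI; it's our calibration. We hand miscalibrated tasks to humans and machines alike, all the time. The fix starts with your own thinking: learn to scope work at the right level, anywhere from the meta (the overall design) to the atomic (a single, well-defined step). Then you can use the tools skillfully at that level and let them amplify you.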

Here's what miscalibration looks like:

Too big: "Build me a complete customer analytics system with predictive modeling." The AI flails and hallucinates an architecture.

Too small: "Write a for-loop." You're taking a Formula One car on a grocery run.

Just right: "Draft the data model for user analytics" or "Suggest three approaches for real-time updates." Now you're accelerating.

The sweet spot is where AI amplifies your judgment without replacing it: strategic thinking, pattern recognition, first drafts that you refine.

The Residency Model

My surgeon friend offered an even better analogy: think of a senior surgeon communicating with residents. How do you translate what's in the senior surgeon's mind into something that meets the resident at their level?

You wouldn't give an intern an aortic arch reconstruction. You wouldn't explain sutures to a fifth-year the way you do to a first-year. You gauge what the resident can and can't do before giving tasks. You adapt as they develop.

The same applies to AI. We expect perfect translation, but like a resident, it might only deliver part of what we envision and fill in the rest with what it thinks is right. The art is in gauging capability, matching instruction to that capability, and creating feedback loops.

This is where Test-Driven Development (writing tests before code) and Behavior-Driven Development (defining expected behavior first) become our surgical checklists: guardrails that check the quality of the work regardless of who or what is doing it.
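To make the checklist idea concrete, here is a minimal test-first sketch in Python. The function `slugify` and its expected behavior are hypothetical, chosen only for illustration. The tests encode the expected behavior up front. Whoever drafts the implementation (you, a colleague, or an AI assistant) has to pass them before the work counts as done.

```python
# A minimal test-first sketch. `slugify` is a hypothetical example function;
# the tests were written before the implementation and act as the checklist.
import re


def slugify(title: str) -> str:
    """Candidate implementation (e.g., an AI-drafted first pass) under review."""
    # Lowercase, collapse each run of non-alphanumeric characters into a
    # single hyphen, then trim any hyphens left at either end.
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower())
    return slug.strip("-")


# Expected behavior, defined up front (the "then" of a BDD-style scenario).
def test_spaces_become_hyphens():
    assert slugify("A Cut Too Deep") == "a-cut-too-deep"


def test_punctuation_is_dropped():
    assert slugify("Help... please!") == "help-please"


def test_empty_title_stays_empty():
    assert slugify("") == ""


if __name__ == "__main__":
    # Run the checklist directly; pytest would also discover these test_ functions.
    for check in (test_spaces_become_hyphens,
                  test_punctuation_is_dropped,
                  test_empty_title_stays_empty):
        check()
    print("all checks passed")
```

The tests don't care who wrote `slugify`. If a draft translates "A" into "x" and confabulates the rest, a failing assertion shows exactly where the gap is.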

The Needle Paradox

Even acupuncture teaches this lesson. When you first encounter non-hypodermic needles, you discover that Chinese needles are substantially thicker while Japanese needles are extremely thin, even marketed as "painless" because of their thin body and microscopically sharper point.

You'd expect the thicker needle, the one nobody markets as "painless," to hurt more. In skilled hands, it doesn't.

An experienced acupuncturist can make practically any needle painless, whether thin or thick, long or short, sharp or not. The tool, the level of the task, the practitioner, the mindset, the guardrails: all of them are critical points of evaluation right now.

The Adaptation Trade-off

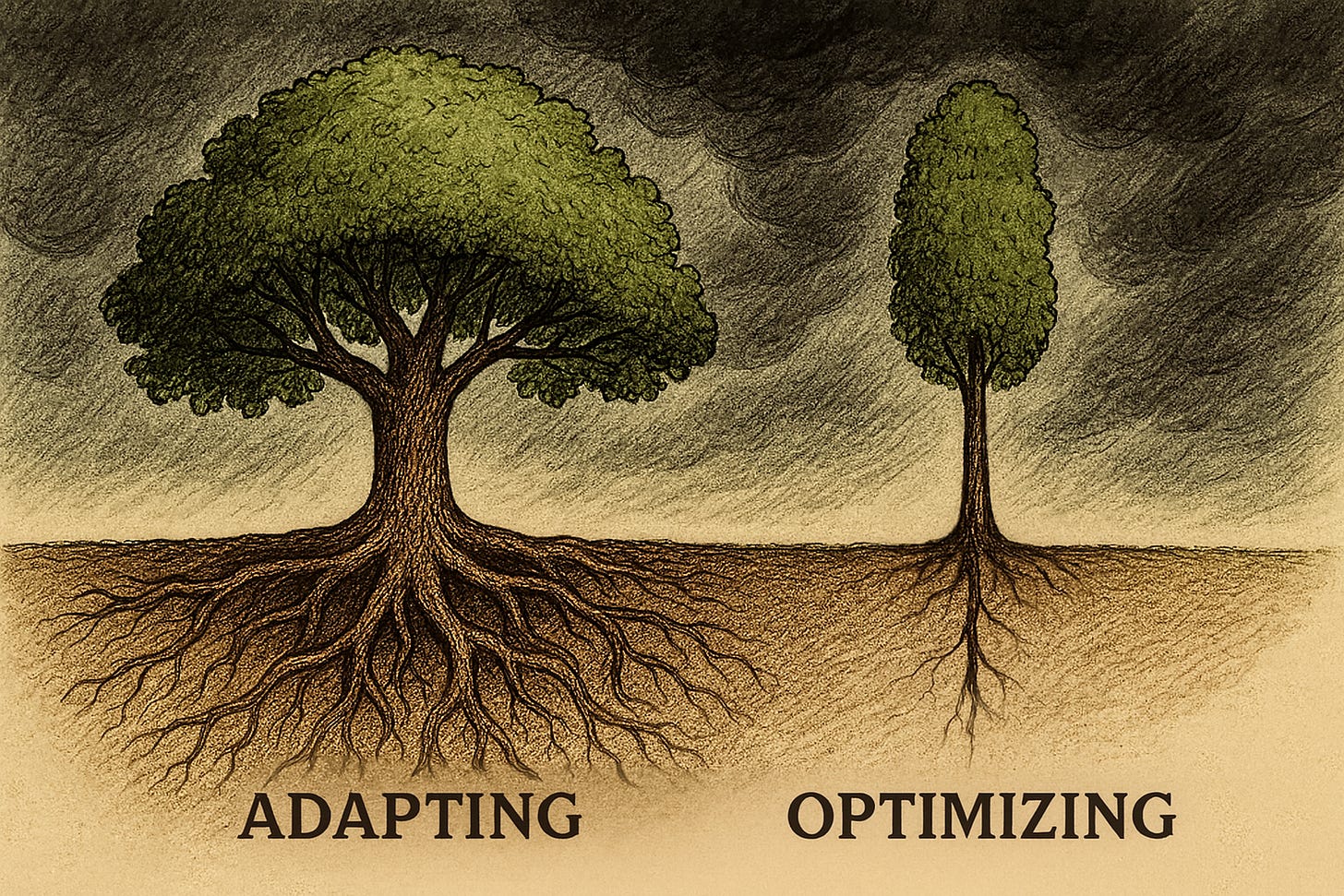

Here's the uncomfortable truth: we've optimized ourselves into fragility.

Think of a child raised speaking multiple languages. They might start speaking later than monolingual peers—the parents worry, the metrics look bad. But that child becomes multilingual, able to navigate worlds others can't access, seeing patterns across linguistic structures that monolingual speakers miss.

We're doing the opposite. We hyperspecialize, maximize for short-term output, hit our quarterly metrics. We produce developers who can only use one framework, surgeons who can only operate with specific tools, students who ace tests but crumble when the rules change.

We're breeding efficiency, not adaptability. We're maximizing today's output while creating tomorrow's obsolescence.

Education's Blind Spot

This exposes the fundamental flaw: we're producing scalpel collectors, not surgeons. We're teaching what to think, not how to think critically.

In theory, the education system should expose us to a range of conflicting views, enabling students to evaluate the fundamental tensions between thinkers, authors, scientists, and creatives. Those tensions should produce two things. The first is the ability to become great practitioners, with knowledge scaffolded on the failures and successes of those before us. The second is the capacity to create original knowledge out of the very tensions we wrestle with.

Instead, we've optimized for content delivery over capability calibration. We hand out tools without teaching when to use which. We assign tasks without gauging readiness. We reward execution over navigation.

The result? Hyperspecialized experts who dominate in stable conditions but shatter when the world shifts. We're creating the professional equivalent of monoculture crops—high yield until the first unexpected disease wipes out everything.

Jazz Within Boundaries

Abe captured it perfectly: "The ideal riffing partner has some guardrails that make the interaction somewhat predictable but allows riffing, not micromanaging."

Think jazz within chord progressions. Structure enables creativity. Constraints force innovation. The musicians who can play in any band, in any style, adapting on the fly—they might not be the absolute best at classical or bebop, but they'll never be obsolete.

The Real Work

So what requires deeper evaluation, introspection, and innovation right now?

We need to pull back from hyperspecialization. Yes, you might produce less output in the short term when you're learning multiple approaches, wrestling with contradictions, building adaptability. But you'll survive the long term.

Stop fetishizing tools—train practitioners who can make any tool work. Match instruction to capability, whether delegating to humans or machines. Build your feedback loops first. Expose tensions deeply—let learners wrestle with contradictions, not memorize answers.

Most importantly, shift from execution to navigation. From "what to think" to "how to think." From maximizing today's metrics to building tomorrow's adaptability.

Your Next Move

The breakthrough with AI won't come from GPT-5 or Claude 4 or whatever's next. It will come from practitioners who chose adaptability over optimization, who learned to think rather than just execute, who built capability across tools rather than dependency on one.

Ask yourself:

Are you optimizing for today's output or tomorrow's adaptability?

What contradictions are you wrestling with rather than avoiding?

Where are you choosing the discomfort of being multilingual over the comfort of fluency in one language?

The skill education hasn't taught us—thinking critically rather than just executing, building adaptability rather than just efficiency, turning tension into wisdom—is the one AI makes essential.

Master that, and any scalpel will cut true. More importantly, you'll still be cutting when everyone else's tools are obsolete.