I’m Cancelling the Apocalypse

A Leader’s Guide to Locking into the Growth Zone

“tonight is the last night of the world, tomorrow will be a whole new world”

—a friend’s comment to me before we played guitar together for hours, a night in 2005

For leaders navigating AI adoption with courage and responsibility.

Self-learning, self-adapting, forever-remembering humanoid robots. That’s just today. Capabilities are doubling every six months. Competitive releases arrive weekly. On every task we hand to AI, performance passes the human level in less than 24 months. And this is only the starting point: computing power, energy efficiency, and form factors will accelerate past Moore’s Law even before the wave of self-improving AIs arrives, which it will.

What’s the price of the apocalypse?

About $20/month for ChatGPT. Maybe $200 if you spring for Pro. Add Claude and Gemini to the tab—purely for sport.

That’s it. The singularity now fits in your coffee budget, auto-renewing between Netflix and Spotify.

So now that we have that out of the way, let’s talk about going from surviving to thriving.

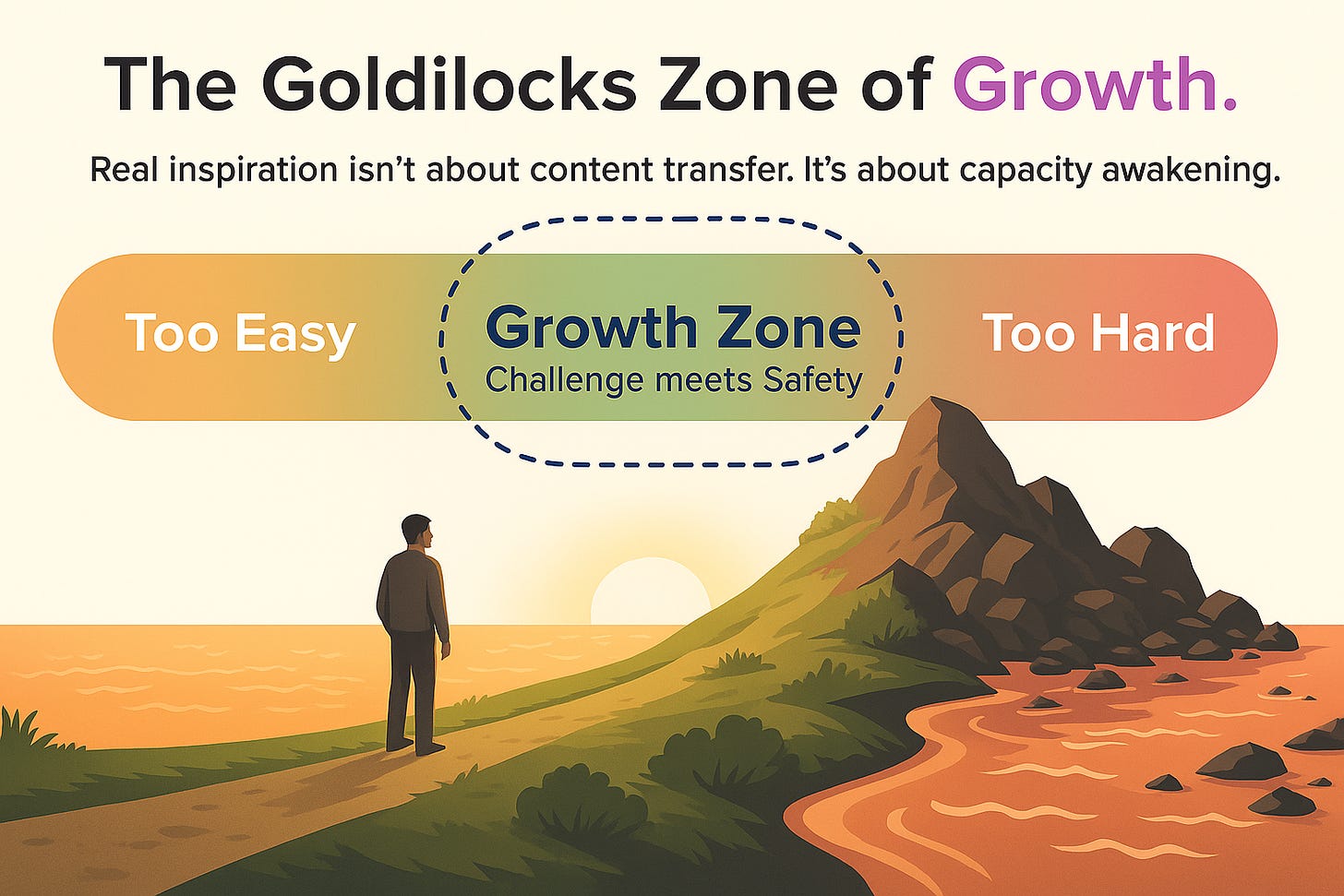

Thriving happens in the Growth Zone—the sweet spot between too easy and too hard. Too easy, and you drift into irrelevance. Too hard, and you freeze in place. The Growth Zone is where challenge meets safety, and it’s where the best leaders keep themselves and their teams.

The Gravity Center of Fear

Stray too far to the “too hard” side of the Growth Zone, and you land in the fear industry—where an entire market exists to sell you paralysis dressed as wisdom. Books with titles like Superintelligence: Paths, Dangers, Strategies. Bestselling books about our “final invention.” TED talks with millions of views, all selling the same product: paralysis.

FUD—Fear, Uncertainty, Doubt—isn’t new. IBM perfected it in the 1970s: “Nobody gets fired for buying IBM” was FUD in a suit and tie.

Today’s AI FUD is more sophisticated. It starts with valid risks (job displacement, decision opacity, power concentration) and amplifies them into extinction narratives. Cave et al. (2020) found that exposure to dystopian AI narratives significantly increased reported concern and lowered support for beneficial AI applications.[1]

The formula is predictable:

1. Take a real trend.

2. Extend it to infinity.

3. Remove human agency.

4. Declare inevitability.

As Lewandowsky et al. (2022) point out, the most effective antidote is to replace vague warnings with a clear, factual alternative explanation that fills the gap left by the misinformation.

Stanford’s Human-Centered AI Institute (2024) found such narratives reduce support for beneficial AI research by 32% and cut AI-related enrollments by 18%. In trying to prevent “the end,” we’re failing to build the beginning.

The GPT-5 Threshold

AI has crossed a threshold—not because of a single release, but because we’ve moved from treating it as a tool to treating it as a collaborator. Today’s systems can remember strategic priorities from months ago, connect insights across domains, and apply your own values-based frameworks in decision-making. Others will soon match and surpass these capabilities, but the real shift isn’t in the tech—it’s in leadership.

The question is no longer “Can AI do this?” but “What do we want to do now that it can?” Leaders who stay in the Growth Zone use that question to guide their teams—balancing challenge with safety, vision with pragmatism—while others get pulled into extremes of fear or blind optimism. That shift in mindset changes everything, including how you lead.

Clockwork or Jazz

For decades, leadership ran on a clockwork model: fixed inputs, fixed outputs, precision as the highest virtue. The new era is more like jazz—steady rhythm, adaptive timing, and co-creation.

If AI is your bandmate, your role isn’t to script every note but to set the tempo, keep the group in the Growth Zone, and improvise when the music changes. That’s how leaders turn powerful technology into a human-centered performance, not just a technical exercise.

Three Postures in the Apocalypse

Most leaders drift toward one of three postures—and only one keeps you in the Growth Zone:

Prophets of Doom – “Too Hard” thinkers: accurate on speed, wrong on meaning.

Ostriches – “Too Easy” operators: manage the future like it’s the past, brittle when the ground shifts.

Weavers – Growth Zone leaders: integrate AI into human-centered systems without surrendering authorship.

The Weaver posture already pays off. Microsoft’s 2025 Work Trend Index shows frontier firms reporting productivity gains of over 50%, and a controlled developer study (Peng et al., 2023) found that AI pair-programming can cut task time roughly in half. The result is shorter innovation cycles and higher trust from stakeholders.

A Different Ending

When I say I’m cancelling the apocalypse, I’m rejecting the notion that the arrival of future AIs is a foregone disaster. I’ve seen what paralysis costs: lost opportunities, wasted talent, diminished human potential.

The end of the world is not inevitable. The end of outdated leadership habits is.

A Framework for Leading Past FUD

Staying in the Growth Zone isn’t an accident—it’s a practice. Here’s how to build the habits that keep fear from overwhelming you and comfort from dulling you.

1. Anchor to a Clear Mission - Every AI decision must connect to a mission you can say out loud without slides.

2. Make Agency Visible - Define what stays human, and why. Review quarterly.

3. Use Specifics, Not Slogans - “Our AI reduced documentation by 30%, giving nurses two extra hours with patients” persuades. “AI will revolutionize healthcare” does not.

4. Measure Human Impact Alongside Output - Track productivity and human flourishing—engagement, trust, retention.

5. Build Adaptive Loops - No plan survives unchanged. Build the capacity for mid-course correction before crises demand it.

Cancelling the apocalypse isn’t an act of optimism—it’s an act of leadership. It’s the refusal to surrender authorship of the future.

Your Leadership Moment

The apocalypse story sells because it’s easy. Leadership is harder. It asks you to face both risk and opportunity, and to choose authorship over drift.

When the future is written, you won’t be judged on whether you deployed an AI before your peers. You’ll be judged on whether you used it to build systems that are more human than the ones it replaced.

The tools are here. The tide is moving. The apocalypse is optional.

References

Cave, S., Dihal, K., & Dillon, S. (2020). Scary robots: Examining public responses to AI. AI & Society, 35(2), 331–340.

Stanford HAI. (2024). Public perception and AI progress: The hidden costs of catastrophic narratives. Stanford Human-Centered AI Institute.

Lewandowsky, S., Cook, J., Ecker, U., & van der Linden, S. (2022). The debunking handbook 2022. Climate Change Communication Research Hub.

Microsoft. (2025). Work trend index 2025: AI at work is here, now comes the hard part. Microsoft Research.

Peng, S., Kalliamvakou, E., Cihon, P., & Demirer, M. (2023). The impact of AI on developer productivity: Evidence from GitHub Copilot. arXiv preprint arXiv:2302.06590.

[1] In their UK survey, Cave et al. (2020) found that concern levels for certain dystopian narratives ranged from 38% to 51%, substantially higher than for positive narratives like Ease (13%).
