The Beautiful Flaw

Why our imperfections are features, not bugs, in the age of perfect machines

So it seems that AIs are reaching toward a kind of perfection that is interesting, frightening, and unachievable by humans, all wrapped into one present that is soon to arrive for humanity. For argument's sake, let's call "soon" less than 10 years.

Cars running driver-assistance software already log roughly 10x fewer crashes per mile than the average US driver[^1]. Medical AI diagnosticians now reach diagnostic accuracy of 85.5% in complex cases where physicians average 20%, roughly 4x better than doctors[^2]. AI tutors demonstrate learning gains equivalent to nearly two years of traditional instruction in just six weeks[^3]. These are substantial improvements in the current way of life, nearing what we can call perfect-by-design for purposes of our chat.
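For anyone who wants to check the multipliers above, here is a minimal sketch of the arithmetic. It uses only the raw figures quoted in footnotes 1 and 2; the variable and function names are mine, purely for illustration.

```python
def ratio(numerator: float, denominator: float) -> float:
    """Return how many times larger the numerator is than the denominator."""
    return numerator / denominator


# Footnote 1: miles driven per recorded crash.
autopilot_miles_per_crash = 6_690_000
us_average_miles_per_crash = 702_000

# Footnote 2: diagnostic accuracy on complex cases, in percent.
ai_accuracy_pct = 85.5
physician_accuracy_pct = 20.0

crash_ratio = ratio(autopilot_miles_per_crash, us_average_miles_per_crash)
diagnosis_ratio = ratio(ai_accuracy_pct, physician_accuracy_pct)

print(f"Miles per crash, assisted vs. US average: {crash_ratio:.1f}x")
print(f"Diagnostic accuracy, AI vs. physicians: {diagnosis_ratio:.1f}x")
```

So "10x" and "4x" are roundings of about 9.5x and about 4.3x.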

Given how rapidly the above has moved from very imperfect to near-perfect, what is our response as humans to these environmental stimuli? Do we muster all the courage we can to just "be more perfect" or "strive for perfection," or do we become depressed and anxious that we can't achieve it or even approach it? Do we allow the weight of the super-performing machines and super-performing people to crush and collapse our fragile egos, and let the id or superego (for my Freudian friends) jump in to throw the remaining dirt on us and slap down a headstone saying "here lies the idiot that couldn't make it"?

For years I've been wondering what imperfection-by-design would look like - before AI, but not before existing automation, tech, and social pressures. I even think I did reasonably well calculating how to navigate some, but not all, of life's weirdness and complexity with the tools I was given, balancing getting an A on a test with making my path my own - and then pop goes the weasel - out come your children. When you try to guide your children on a journey that you're still in the middle of, things get awkward. You want them to get that A in class, but you want them to be uniquely themselves. Sometimes there is overlap there, and many times not. So how do you decide? Have I succeeded? Failed? Unclear, and there isn't even a meaningful or reliable benchmark to measure it.

As a parent, you also come to realize that no matter how typical or atypical you or your children are, every school has problems, no grocery store has everything you need, every friend you or your children have brings their own quirks that make them "fun" or "interesting" (those quotes doing heavy lifting), and no work problem is ever truly fully solvable. So in a world of unsolvable things, is it possible to make the experience fun-solvable? Could that be a start toward living the imperfection-by-design ethos: checking in with, wrestling with, or just plain acknowledging the deep and unchartable beauty of our uniqueness via these imperfections? Can each of those 70 facets of our personal diamond be part of those imperfections... or worse yet, can those imperfections BE our perfection?

The Jewel in the Crown

There's a 300-year-old Hasidic story, attributed to the Baal Shem Tov, about a great king whose crown held the rarest jewel in the world—flawless, luminous, impossibly perfect. One day, it cracked. The king was devastated. This wasn't just a stone—it was the symbolic heart of the kingdom. He summoned every expert from every land, offering riches to anyone who could repair it without diminishing its beauty.

They all failed. Some tried to fill the crack. Others tried to polish it out. But every attempt only made it worse. The crack remained, a stark reminder of what was lost. Finally, one quiet jeweler approached with a strange request: permission to engrave the jewel. The court balked. Engrave the most precious gem in the world? But the king, tired and grieving, agreed.

What the jeweler did next changed everything. He didn't hide the crack. He traced it—delicately, precisely—and from its line carved the stem of a blooming rose. The flaw became the flower. And the king wept—not because the jewel had been restored, but because it had been transformed.

So I sit here and wonder if there is a way to manufacture this new type of skill, or tolerance, or ability that can enable us to lean into that which is unknowable and unachievable by design.

The Japanese Paradox: When Perfect and Imperfect Dance

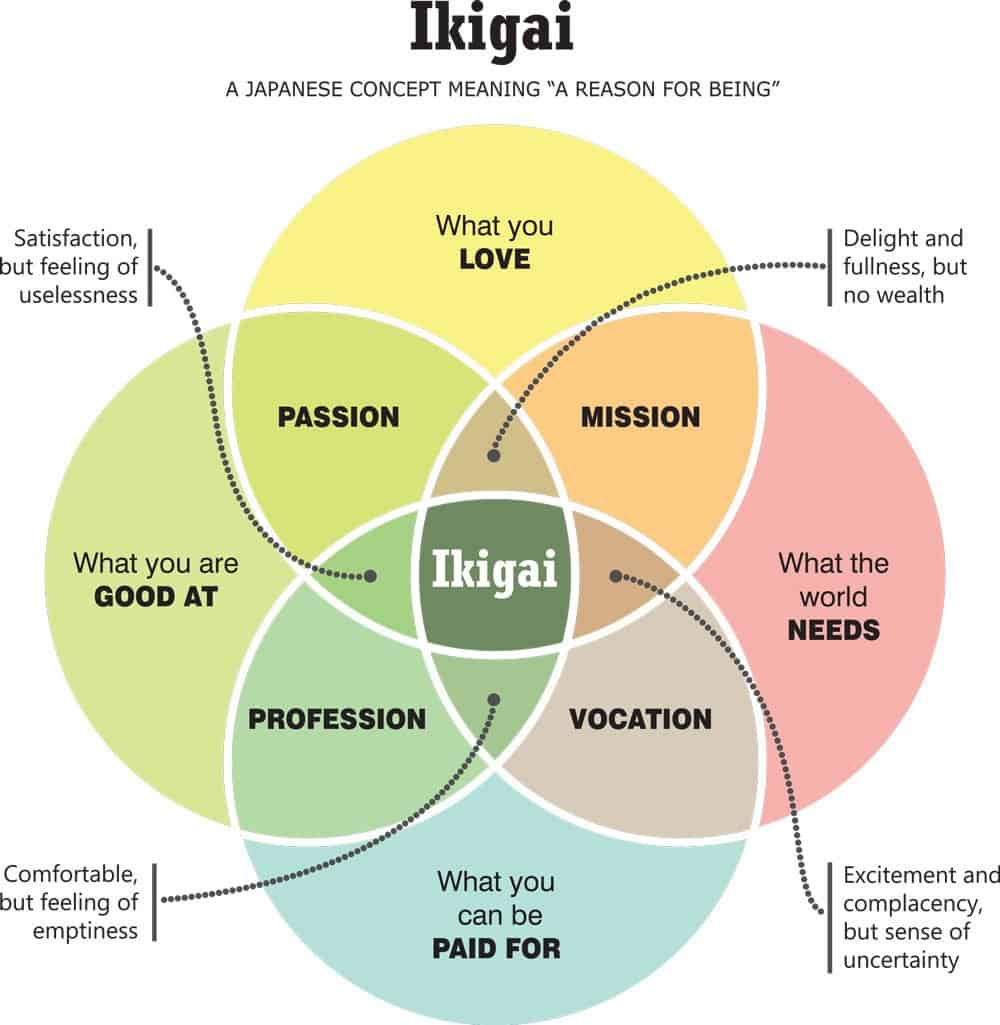

Japanese culture holds two seemingly contradictory concepts in elegant tension. There's ikigai – the pursuit of perfect alignment between what you love, what you're good at, what the world needs, and what you can be paid for. It's a framework for finding your perfect purpose, your reason for being.

Then there's wabi-sabi – the art of finding beauty in imperfection, impermanence, and incompleteness. A bowl with a crack, mended with gold. A garden where asymmetry creates harmony. A moment that's beautiful precisely because it will end.

How can a culture celebrate both the pursuit of perfect purpose and the beauty of imperfection?

Maybe that's exactly the point. Maybe they're not opposites but dance partners.

Ikigai gives us direction – the north star of purposeful perfection. Wabi-sabi gives us permission – the grace to be beautifully broken along the way. One without the other creates either brittle perfectionism or aimless acceptance.

What if our response to AI's perfection isn't to compete, but to cultivate both – perfect purpose, imperfect expression?

I saw this play out last week in a product review. Our AI had generated flawless documentation – technically accurate, comprehensively indexed, searchable to the millisecond. But the product lead, a leader who writes like he talks (with plenty of filler words and tangents), had scribbled his own guide on a napkin during lunch. Guess which one the new hires actually used?

The napkin. Every time.

Not because it was better. Because it was human. The coffee stain near the database diagram told them someone real had struggled with this. The crossed-out sections showed the learning path. The doodle in the margin revealed frustration transformed into understanding.

The Variability Matrix

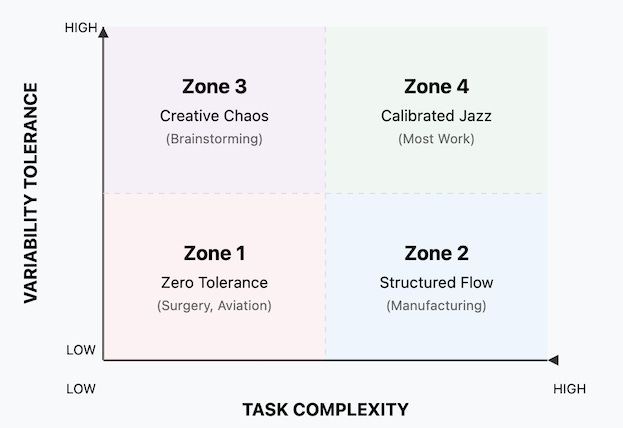

As task complexity rises, the space of valid solutions widens, increasing the payoff to exploratory variance. This creates four distinct zones:

Zone 1: Zero Tolerance (Low Complexity, Low Variability)

Surgery. Aviation. Nuclear operations. Here, the WHO surgical safety checklist was associated with a 47% relative drop in surgical deaths, from 1.5% to 0.8%[^4]. Medical errors typically result from complex system failures involving human factors, communication breakdowns, and organizational processes rather than isolated human variability. Studies of algorithmic decision-making systems show concerning patterns of over-reliance, with human operators disagreeing with AI recommendations in only 3.2% of cases in some contexts, potentially indicating automation bias rather than effective oversight[^7].

Zone 2: Structured Flow (High Complexity, Low Variability)

Manufacturing. Logistics. Accounting. Complex but predictable. Toyota's production system allows only ±2% deviation while maintaining flexibility through standardized variation points. The key is knowing where to be rigid and where to flex.

Zone 3: Creative Chaos (Low Complexity, High Variability)

Brainstorming. Early design. Play. Google's "20% time" produced Gmail and AdSense – unstructured exploration increases the quality of the best idea by enabling broader search of the solution space[^5]. Only 1 in 20 napkin sketches survives design review, but the marginal cost of sketching is negligible compared to the value of the breakthrough idea.

Zone 4: Calibrated Jazz (High Complexity, High Variability)

Most knowledge work lives here. Customer service that needs both efficiency and empathy. Teaching that requires both consistency and adaptation. Research supports that customer service approaches allowing agent flexibility and autonomy generally outperform rigid scripted interactions, though specific metrics vary by organization and context.

Strategic process variation—allowing controlled deviation from standard procedures—can enhance creativity and adaptability when the benefits of flexibility outweigh the costs of inconsistency.
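If it helps to see the four zones as a lookup rather than a picture, here is a rough sketch. The zone names and their complexity/variability labels come straight from the matrix above; the 0-to-1 scores, the 0.5 cutoff, and the `classify_zone` function are my own hypothetical scaffolding, not a validated scoring method.

```python
from enum import Enum


class Zone(Enum):
    ZERO_TOLERANCE = "Zone 1: Zero Tolerance"
    STRUCTURED_FLOW = "Zone 2: Structured Flow"
    CREATIVE_CHAOS = "Zone 3: Creative Chaos"
    CALIBRATED_JAZZ = "Zone 4: Calibrated Jazz"


def classify_zone(complexity: float, variability: float, cutoff: float = 0.5) -> Zone:
    """Map two scores in [0, 1] onto the four zones.

    The scores and the cutoff are illustrative assumptions; in practice
    a team would have to agree on how to rate its own work.
    """
    for name, score in (("complexity", complexity), ("variability", variability)):
        if not 0.0 <= score <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {score}")

    high_complexity = complexity >= cutoff
    high_variability = variability >= cutoff

    if high_complexity and high_variability:
        return Zone.CALIBRATED_JAZZ
    if high_complexity:
        return Zone.STRUCTURED_FLOW
    if high_variability:
        return Zone.CREATIVE_CHAOS
    return Zone.ZERO_TOLERANCE


# Example: a brainstorming session rated as simple but wide open.
print(classify_zone(complexity=0.2, variability=0.9).value)
```

The hard cutoff is the least realistic part: real work slides between zones, which is sort of the point of the whole piece.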

Designing for Beautiful Failure

I've started experimenting with what I call "beautiful failure points" – places in systems where human imperfection doesn't break things but makes them better. Here's what I've learned:

Build in Wobble Room

Like a bridge designed to sway in the wind, systems need flex points. One team added a "confusion chronicle" to their documentation – a running log of every time someone got lost. The AI couldn't generate it. Only humans could populate it. Organizations with strong onboarding processes can improve new hire productivity by up to 70%, particularly when documentation includes common challenges and learning pathways that new employees typically encounter[^8].

Architect Productive Chaos

A design firm I work with starts each AI-assisted project with "terrible idea time." Everyone shares their worst solutions. By hand. On paper. No AI assistance. The "Worst Possible Idea" technique, developed by innovation consultant Bryan Mattimore, helps teams overcome creative blocks by deliberately exploring unconventional solutions that can later be refined into viable products[^9].

Measure the Unmeasurable

We track everything that can be quantified. But what about tracking surprise? Delight? That moment when someone says "I never thought of it that way"? One team created a moments log – moments when human insight trumped algorithmic answers. Research confirms that effective decision-making requires both algorithmic analysis and human judgment, particularly for strategic foresight and intuitive insights that complement data-driven approaches[^10]. Not everything that matters can be measured, but everything that matters leaves traces.

The Threshold Moments

One of my kids came home from his summer internship this week, frustrated. His AI assistant had helped him complete every assigned task perfectly. "But I didn't learn anything," he said. "I just executed instructions, and I am not even making my own decisions."

So we tried something different. We turned off the AI and tackled a similar problem together. We got several approaches wrong. We argued about methods. We invented our own notation that made no sense but helped us think. By the end, he had finished less but learned more.

That's the paradox: Perfect assistance can create imperfect learning.

This connects to what I explored in The Last Skill – when friction disappears, so does growth. But now I'm seeing something deeper. It's not just about preserving friction. It's about designing for beautiful imperfection.

Research on "technostress" shows that over-reliance on perfect digital assistance correlates with decreased problem-solving capability and increased anxiety – the very outcomes we sought to avoid[^6].

Your Imperfection Inventory

As we stand at this threshold, where AI approaches perfection in domain after domain, we need to take inventory not of what we do well, but of how we fail beautifully. Ask yourself:

Where does your inconsistency create connection?

When does your inefficiency enable discovery?

How does your imperfection invite others in?

What breaks when you're too perfect?

How does your wabi-sabi serve your ikigai?

The New Human Advantage

Here's what I'm beginning to understand: Our advantage isn't in doing things machines can't do yet. It's in being what machines can never be.

Inconsistent. Surprising. Breakable. Healable. These qualities emerge from lived experience and social trust – something stochastic sampling can mimic but not embody.

A musician friend put it perfectly: "AI can play every note correctly. But music isn't about playing notes correctly. It's about playing them wrong in exactly the right way."

Manufacturing Imperfection

So can we manufacture this skill? Can we build the tolerance for imperfection that lets us thrive alongside perfect machines?

Yes, but not through traditional training. Through practice. Through design. Through choosing, again and again, to build systems that celebrate rather than eliminate our beautiful human inconsistencies.

Start small:

Add "mistakes that taught us" sections to your reports

Create rituals around productive imperfection

Measure surprise alongside success

Build wobble room into workflows (±15% schedule flex in early phases)

Document the journey, not just the destination

Find your ikigai in the imperfect process, not just the perfect outcome

The Beautiful Broken Future

As I write this, my coffee cup has a chip on the rim. My new desk (which until a month ago was a nice but old door) has imperfections throughout - ones I admire daily and even run my fingers over, just to get a sense of the flow of the bumps, rough patches, and sharp edges. The plant in my window grows asymmetrically toward the light. None of these things are optimal. All of them are real.

In a world of perfect AI, our imperfections aren't weaknesses to overcome. They're the signature of our humanity. The crack where the light gets in. The wobble that proves we're alive.

The question isn't whether we can compete with perfect machines. It's whether we can resist becoming them.

So here's my provocation: What if the highest human achievement in the age of AI isn't perfection, but perfectly calibrated imperfection?

Not sloppiness. Not carelessness. But the deliberate cultivation of beautiful variability. The conscious choice to remain gloriously, productively, connectively human.

The pursuit of ikigai – our perfect purpose – through the practice of wabi-sabi – our imperfect expression.

Because in the end, the machines will achieve perfection.

Our job is to achieve something else entirely.

Our job is to stay beautifully broken.

And maybe, just maybe, that's perfect.

[^1]: Tesla Vehicle Safety Report Q2 2025: Tesla recorded one crash for every 6.69 million miles driven with Autopilot vs. one crash every 702,000 miles for the US average (NHTSA/FHWA 2023 data). Source: https://www.tesla.com/VehicleSafetyReport

[^2]: Microsoft AI Diagnostic Orchestrator (MAI-DxO) correctly diagnosed 85.5% of complex NEJM case studies compared to 20% accuracy for human physicians. Microsoft AI Team. (2025). "The Path to Medical Superintelligence." https://microsoft.ai/new/the-path-to-medical-superintelligence/

[^3]: World Bank pilot program in Edo State, Nigeria achieved 0.3 standard deviation improvement in learning outcomes—equivalent to nearly two years of typical schooling in six weeks. De Simone, M. E., et al. (2024). World Bank Education Technology Study. https://www.worldbank.org/

[^4]: Haynes, A. B., et al. (2009). "A Surgical Safety Checklist to Reduce Morbidity and Mortality in a Global Population." New England Journal of Medicine, 360(5), 491-499. The WHO checklist reduced mortality by 47% from 1.5% to 0.8%.

[^5]: Gmail and AdSense were developed during Google's 20% time policy, with AdSense now accounting for approximately 25% of Google's annual revenue. Sources: Brin, S. & Page, L. (2004). Google Founders' IPO Letter; Qz.com analysis of Google's 20% time policy.

[^6]: Tarafdar, M., Tu, Q., & Ragu-Nathan, T. S. (2010). "Impact of Technostress on End-User Satisfaction and Performance." Journal of Management Information Systems, 27(3), 303-334. Studies show technostress from over-reliance on ICT negatively impacts user satisfaction and performance.

[^7]: Saura, J. R., & Aragó, R. (2021). Analysis of algorithmic decision-making in public administration: The RisCanvi case study. Cognitive Research: Principles and Implications.

[^8]: Brandon Hall Group. (2023). "The State of Onboarding Research Report."

[^9]: Mattimore, B. (2012). "Idea Stormers: How to Lead and Inspire Creative Breakthroughs." Jossey-Bass.

[^10]: Cambridge Judge Business School. (2025). "Human brain vs AI: what makes better decisions?" https://www.jbs.cam.ac.uk/2025/human-brain-vs-ai-what-makes-better-decisions/