The Doing Was the Knowing

What we lose when we stop doing the work

Two Kids, Two Questions

My youngest comes to the computer expecting it to know everything. Her expectations are high and her patience is low — she wants the answer, not the journey. So I’ve been teaching her to say something different: “Teach me, not tell me.” She doesn’t have to use those words exactly, because she’s little and the grammar wanders, but the intent is unmistakable. I want her to want the path, not just the destination. I want her to walk it.

My thirteen-year-old said something different to me this past weekend. He was working through a problem — something for school, math or science, I don’t remember which — and he looked at me and said, “I ask the AI questions, then think about what it says.”

I told him the opposite: “Always think before you ask.”

He looked at me like I’d handed him a wrench when he’d asked for a flashlight.

Two kids. Two instincts about where to stand relative to the machine. One is learning to ask for the teaching. The other wants to be informed. Neither wants to be replaced — but they’re negotiating the boundary differently, in real time, at the kitchen table, over homework.

I keep thinking about these two moments. Not as parenting wins or losses (they were neither), but as something larger. Because what my kids were working through, without any framework or jargon to lean on, is the same question every piece of software is trying to answer right now: Where does the human go?

Not whether. Where.

These aren’t just parenting questions. They’re architecture questions. And every system being built or rebuilt this year (every CRM, every workflow engine, every enterprise platform) is making the same negotiation my kids were making over homework. The boundary is moving. The question is who notices, and who gets to choose where it lands. I wrote more about this in my introductory piece, Let the Robot Wars Begin!

The Grammar We Inherited

For half a century, almost every application you’ve ever used has been built on four operations: Create, Read, Update, Delete. CRUD. The thing about CRUD that nobody says out loud: it was never really about the software.

CRUD assumes a human on the other side of every operation. A person who decides what to create. Who knows what to search for. Who determines what needs editing and what needs deleting. The software held the nouns — the records, the fields, the data. The human supplied the verbs. Every click was a decision. Every save was a judgment call.

The human was the verb layer.

I don’t think we noticed because it was so total. Like asking a fish to describe water. For fifty years, we sat between what we wanted to accomplish and the data we needed to accomplish it, and we operated. We curated our own inboxes. We reasoned through our own pipelines. We updated our own records. We did the work.

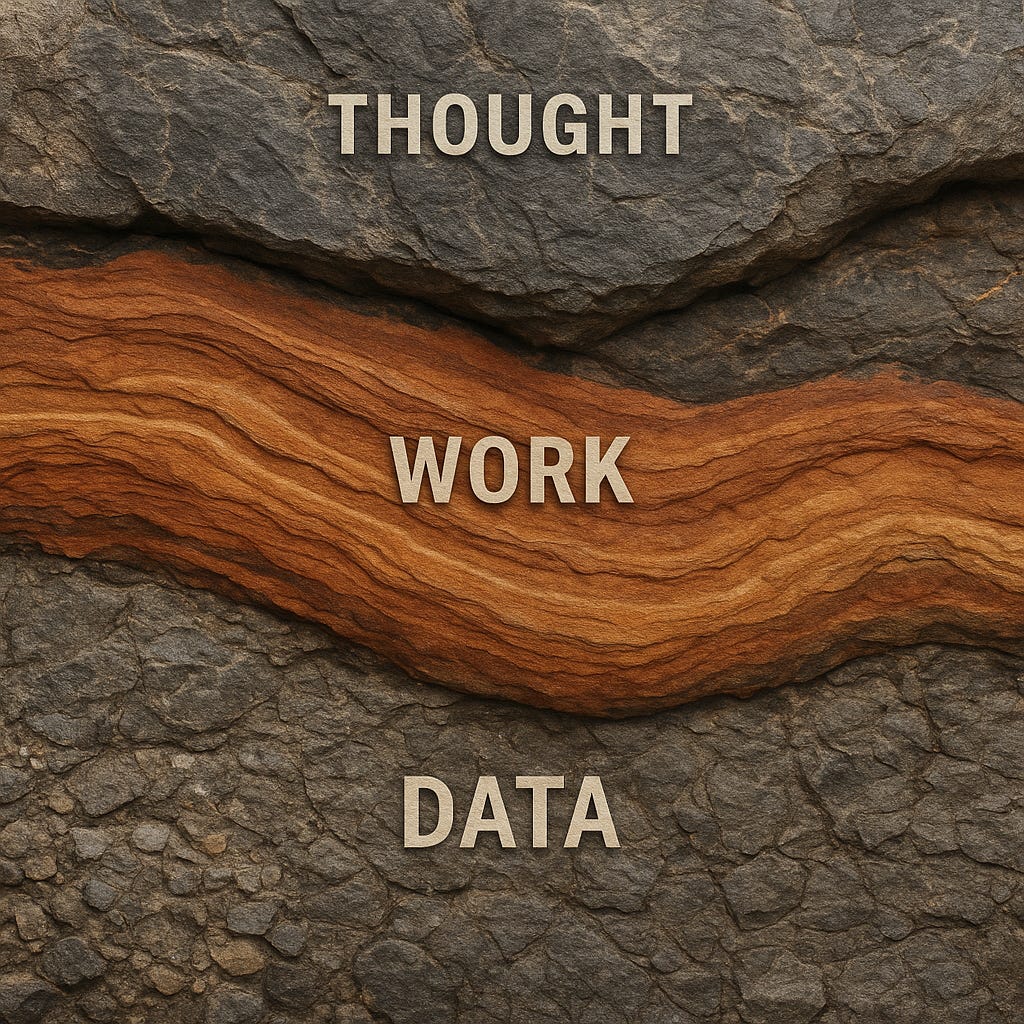

Step back far enough, and the whole arrangement falls into three levels. At the bottom, data: the nouns — records, objects, files, KPIs. This is where Classic CRUD lives, and it isn’t going anywhere. In the middle, operations: the verbs — deciding, sorting, weighing, acting. For fifty years, humans lived here, operating between intent and information. At the top, intent: the goals — relationships, values, purpose. What you were actually trying to accomplish. That was always there, but it was implicit, encoded in the head of whoever was doing the work. Never formalized. Never had to be.

Jens Rasmussen[1] saw something similar in the 1980s when he described three levels of cognitive control: skill-based behavior operating on raw signals, rule-based behavior operating on signs, and knowledge-based behavior operating on symbols. Data, operations, intent, by different names and in a different decade, pointing at the same structure.

The structure isn’t new. What’s new — and what I began exploring in Let the Robot Wars Begin! — is that someone else is moving into the middle.

The Assumption Breaks

Consider a salesperson. Not an abstraction — a real one. Someone who manages a pipeline of fifty deals across three territories, who opens their CRM on Monday morning and starts working.

In Classic CRUD, the system holds the deals. The salesperson supplies the intelligence. They mentally sort the pipeline — this one’s real, this one’s stalling, this one died two weeks ago but nobody updated the record. They scan for signals: tone from the last call, budget shifts, a competitor name dropped casually. They build a picture no dashboard captures. They update the fields. They send the follow-up. They close or they move on.

Now reimagine every one of those operations with an agent in the middle.

Curate. The agent decides what’s relevant. It surfaces the three deals that need attention today and deprioritizes the twelve that don’t. The salesperson used to do this — it was the first fifteen minutes of their morning, and it was where intuition lived.

Reason. The agent weighs signals and determines what’s next. It cross-references email sentiment, deal velocity, competitive intelligence, and recommends an approach. The salesperson used to hold that context — loosely, imperfectly, but theirs. Now the synthesis happens before they see the screen.

Update. This is the one that tricks you. In Classic CRUD, Update is a discrete event — a human changes a field, one value becomes another. In Agentic CRUD, Update is continuous. The system is always re-weighting, always adjusting confidence scores and priority rankings. The pipeline looks different this morning and you don’t know why. The agent re-assessed overnight, and the world shifted while you slept. Same word. Different reality.

Do. Classic CRUD never needed this. It was inward-facing — operations on data, inside the system’s walls. But agents face outward. They send the email. They book the meeting. They trigger the workflow. Do is the operation that crosses the boundary between record and reality, and it’s the one Classic CRUD never contemplated — because the human was always the one who crossed it.
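To make the Classic-versus-Agentic contrast concrete, here is a minimal Python sketch. It is not a real CRM or agent API: the `Deal` type, the `curate`, `reason`, `update`, `do`, and `agent_pass` functions, the scoring weights, and the 14-day staleness threshold are all hypothetical, chosen only for illustration. A Classic CRUD update is one human edit to one field; the agent pass re-scores every deal, surfaces a few, recommends a step for each, and acts outward (here the "world" is just a list standing in for sent emails).

```python
from dataclasses import dataclass


@dataclass
class Deal:
    name: str
    days_since_contact: int
    budget_confirmed: bool
    priority: float = 0.0  # rewritten by the agent on every pass


# Classic CRUD: a person decides to change one field, once.
def classic_update(deal: Deal, attribute: str, value: object) -> None:
    setattr(deal, attribute, value)


# Agentic CRUD: hypothetical names, weights, and thresholds throughout.
def update(deals: list[Deal]) -> None:
    """Update: re-score every deal on each pass, not one discrete edit."""
    for d in deals:
        base = 1.0 if d.budget_confirmed else 0.3
        d.priority = base - 0.02 * d.days_since_contact


def curate(deals: list[Deal], limit: int = 3) -> list[Deal]:
    """Curate: decide which deals surface today; the rest are deprioritized."""
    return sorted(deals, key=lambda d: d.priority, reverse=True)[:limit]


def reason(deal: Deal) -> str:
    """Reason: weigh signals and recommend a next step."""
    if deal.days_since_contact > 14:
        return "re-engage"
    if deal.budget_confirmed:
        return "send proposal"
    return "qualify budget"


def do(deal: Deal, action: str, outbox: list[str]) -> None:
    """Do: act outside the record. Here the outside world is only an outbox list."""
    outbox.append(f"{action}: {deal.name}")


def agent_pass(deals: list[Deal], limit: int = 3) -> list[str]:
    """One overnight pass: Update, then Curate, Reason, and Do for each surfaced deal."""
    outbox: list[str] = []
    update(deals)
    for deal in curate(deals, limit):
        do(deal, reason(deal), outbox)
    return outbox


if __name__ == "__main__":
    pipeline = [
        Deal("Acme", days_since_contact=3, budget_confirmed=True),
        Deal("Globex", days_since_contact=20, budget_confirmed=True),
        Deal("Initech", days_since_contact=5, budget_confirmed=False),
        Deal("Umbrella", days_since_contact=30, budget_confirmed=False),
    ]
    for line in agent_pass(pipeline):
        print(line)
```

Run as a script, this prints `send proposal: Acme`, `re-engage: Globex`, and `qualify budget: Initech`. Umbrella is dropped without a word, and nothing in the loop records why; the salesperson's morning triage has already happened before they open the screen.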

Parasuraman, Sheridan, and Wickens[2] identified these same four classes of automation back in 2000: information acquisition, information analysis, decision and action selection, and action implementation. Curate, Reason, Update, Do, under different names, in a research paper most practitioners never read.

The acronym stays. The letters stay. What changes is everything inside them. Data operations become decision operations.[3] And the human, the salesperson who used to be the verb layer, finds themselves a floor up, in a place they’ve never had to articulate before.

That displacement isn’t unique to sales. It looks different depending on where you sit. If you’re a designer, it means: design for goals, not actions. Your interface isn’t a place where humans perform operations anymore; it’s a place where humans articulate intent and evaluate outcomes. If you’re a developer, it means: the contracts you’re building aren’t just about what data goes where; they’re about what the system is for. If you’re a manager, it means: your team’s job is defining what good looks like. Clearly. Explicitly. Repeatedly. Because agents will execute against whatever definition you give them, and if you’ve never had to formalize your intent before, you’re about to discover how much of it was tacit, living in hallway conversations and tribal knowledge and the gut feelings of people who’ve done the work long enough to stop explaining why.

The humans are moving up. It’s not as simple as it sounds.

The Paradox

Moving up sounds like a promotion. It isn’t. Or rather, it is, and it’s also something more complicated than that.

The verbs are where competence lives. The salesperson who manually worked their pipeline didn’t just manage deals — they knew their deals. The curating built intuition. The reasoning built judgment. The updating built awareness. The doing built confidence. Each verb was a repetition, and each repetition was a lesson, and the accumulation of those lessons over months and years is what we call expertise.

The doing was the knowing.

Strip the verbs from a professional and you don’t get a strategist. You get someone staring at a dashboard they can no longer read — not because the data is hidden, but because the understanding was never in the data. It was in the handling of the data. The touching, sorting, weighing, second-guessing, correcting. The friction was the teacher.

The efficiency that moves humans out of the operations also removes the feedback loop that built their capacity to operate. Promote someone out of the verbs and you cut off the very practice that taught them how to set goals.[4]

We’ve seen it before. Pilots who can’t hand-fly after years of autopilot. Doctors whose diagnostic instincts dull when algorithms pre-screen. Navigators who can’t read a map because GPS made the reading unnecessary. Parasuraman and Manzey[5] named two failure modes: complacency (poorer detection of malfunctions when systems appear reliable) and automation bias (acting on incorrect recommendations because you’ve stopped checking). Endsley[6] documented the mechanism: situation awareness, with its three levels of perception, comprehension, and projection, degrades when operators are removed from direct control. You can’t maintain awareness of a process you no longer perform. The awareness wasn’t a separate skill. It was a byproduct of the doing.

My kids see it. That’s what strikes me.

My youngest, who comes to the machine expecting answers — she’s negotiating the same boundary as that salesperson. That’s why I keep teaching her to say “teach me, not tell me.” She needs the handling. She needs the friction. The path is the point, even when she’d rather skip it.

“Always think before you ask” is a father saying: don’t outsource reasoning. The order matters. If you reason first, the agent’s answer sharpens your thinking. If you ask first, the agent’s answer becomes your thinking. Same tool. Different sequence. Entirely different outcome.

The risk isn’t that agents get it wrong. The risk is that agents get it right — often enough, long enough — that humans stop participating. And then, on the day the agent gets it wrong, no one in the room has the competence to notice.

Where We Stand

I’m not going to give you a checklist. Checklists are tools for doing. And the doing is exactly what’s changing. What I can offer is a set of orientations.

If you build systems, build them so the human can see the verbs. Surface the Curate — show what was filtered and why. Surface the Reason — show the weights and trade-offs. Make the Update visible — what changed, when, and on whose authority. Let the Do be revocable — because action in the world is not the same as action on a record, and the undo button matters more when the agent is the one pressing send.
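One way to turn these orientations into a data structure is a ledger the agent must write to as it works. The sketch below is hypothetical: the `AgentLedger` class, its fields, and the ten-minute undo window are illustrative assumptions, not part of Amplifier or any other framework. It records what Curate filtered out and why, the weights Reason used, every Update with its time and authority, and it holds each Do in a revocable queue until a delay elapses, much like an "undo send" feature in an email client.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Change:
    """Visible Update: what changed, when, and on whose authority."""
    attribute: str
    old: object
    new: object
    at: datetime
    authority: str  # e.g. "agent" or a named person


@dataclass
class PendingAction:
    """A Do that has been decided but not yet released into the world."""
    description: str
    queued_at: datetime
    cancelled: bool = False


@dataclass
class AgentLedger:
    undo_window: timedelta = timedelta(minutes=10)
    filtered_out: dict[str, str] = field(default_factory=dict)  # Curate: item -> why it was hidden
    weights: dict[str, float] = field(default_factory=dict)     # Reason: signal -> weight used
    changes: list[Change] = field(default_factory=list)         # Update: full change history
    pending: list[PendingAction] = field(default_factory=list)  # Do: actions still revocable

    def record_filter(self, item: str, reason: str) -> None:
        self.filtered_out[item] = reason

    def record_change(self, attribute: str, old: object, new: object,
                      authority: str, at: datetime) -> None:
        self.changes.append(Change(attribute, old, new, at, authority))

    def queue_action(self, description: str, now: datetime) -> PendingAction:
        action = PendingAction(description, now)
        self.pending.append(action)
        return action

    def revoke(self, action: PendingAction) -> None:
        action.cancelled = True

    def release_due(self, now: datetime) -> list[str]:
        """Release actions whose undo window has elapsed; discard revoked ones."""
        released: list[str] = []
        still_pending: list[PendingAction] = []
        for action in self.pending:
            if action.cancelled:
                continue  # revoked by a human: it never leaves the system
            if now - action.queued_at >= self.undo_window:
                released.append(action.description)
            else:
                still_pending.append(action)
        self.pending = still_pending
        return released


if __name__ == "__main__":
    t0 = datetime(2026, 1, 5, 9, 0)
    ledger = AgentLedger()
    ledger.record_filter("Umbrella", "no contact in 30 days")
    ledger.record_change("priority", 0.2, 0.94, authority="agent", at=t0)
    ledger.queue_action("send follow-up to Acme", t0)
    meeting = ledger.queue_action("book meeting with Globex", t0)
    ledger.revoke(meeting)  # a human steps in before the action goes out
    print(ledger.release_due(t0 + timedelta(minutes=11)))
```

Run as a script, this prints `['send follow-up to Acme']`: the revoked meeting never goes out. A fixed delay is only one design choice; actions that cannot wait would need some other form of revocability, such as a follow-up correction.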

If you manage teams, your new job is legibility of intent. The more clearly your organization can articulate what it’s trying to accomplish — not in KPIs, but in purpose — the better agents will serve it. And the more clearly your people can see the verbs still available to them, the less likely they are to atrophy in the gap between goal and outcome.

If you’re a practitioner (the salesperson, the designer, the analyst), protect your verbs. Keep your hands in the work. Not all of it. Not the parts that are genuinely mechanical. But the parts that teach you something. The curating that builds intuition. The reasoning that sharpens judgment. You will not get those back by reading the agent’s summary. Brynjolfsson and McAfee[7] were right: the productive path is racing with the machine, not against it. But racing with it means you have to keep running. The moment you sit down, you’re a passenger.

This is part of what we’re building with Amplifier — an open-source agent framework where the kernel provides the data structures and everything about how agents curate, reason, update, and act lives in replaceable modules at the edges. This isn’t theory. People are building it.

But the question — the one my kids were negotiating at the kitchen table — isn’t ultimately about software. It’s about identity. Who are you when the verbs that defined your expertise belong to something else? What remains when the doing is done for you?

I think the answer lives at the top. Not as a retreat — as a responsibility. Intent isn’t just what you want to accomplish. It’s what you believe matters, and why, and for whom. That was always there. We just never had to be articulate about it, because we were too busy doing the work to stop and say what the work was for.

I watched my son hesitate before pressing enter.

And the question — the one that matters, the one he’s already living — isn’t whether to use the machine.

Where do the humans stand?

Further Reading

Let the Robot Wars Begin! — The 2026 opening: compiled intent, agency erosion, and who owns the verbs.

The Flip — On the perceptual shift that happens when you start working with AI instead of on it, and why the reorientation changes everything downstream.

Total Transformation — On dragonflies, metamorphosis, and the question of what kind of change AI is actually asking of us.

Flawed. Unfixable. And Unbreakable. — On course correction as a superpower, and why the capacity to recover matters more than the ability to avoid failure.

Notes

[1] Jens Rasmussen's framework of cognitive control describes three levels that map remarkably well to the data/operations/intent structure: skill-based behavior operates on raw signals, rule-based behavior operates on signs, and knowledge-based behavior operates on symbols. Rasmussen, J. (1983). "Skills, Rules, and Knowledge; Signals, Signs, and Symbols, and Other Distinctions in Human Performance Models." IEEE Transactions on Systems, Man, and Cybernetics, SMC-13(3), 257–266.

[2] Parasuraman, Sheridan, and Wickens identified four classes of automation that align with the agentic CRUD operations: information acquisition (Curate), information analysis (Reason), decision and action selection (Update), and action implementation (Do). Parasuraman, R., Sheridan, T. B., & Wickens, C. D. (2000). "A Model for Types and Levels of Human Interaction with Automation." IEEE Transactions on Systems, Man, and Cybernetics, Part A: Systems and Humans, 30(3), 286–297.

[3] This phrase, “data operations become decision operations,” captures the shift more precisely than any framework diagram. Classic CRUD operated on data: creating records, reading fields, updating values, deleting rows. Agentic CRUD operates through data toward decisions: curating what matters, reasoning about what it means, continuously updating assessments, and acting on conclusions. The difference is directional. CRUD pointed inward, at the database. Agentic CRUD points outward, at the world. The data is still there (it has to be), but it’s no longer the object of the work. It’s the substrate.

[4] This is the agency paradox, a term worth naming even if the essay doesn’t wear it on its sleeve. The paradox is structural, not psychological: the same optimization that elevates humans to the intent level simultaneously degrades their ability to operate there, because intent was never learned abstractly. It was learned through the verbs. Every framework for “human-in-the-loop” design must contend with this: keeping the human in the loop is not just a safety measure. It is the mechanism by which the human remains capable of being in the loop at all.

[5] Parasuraman and Manzey documented two failure modes that emerge when humans are displaced from operations: automation complacency and automation bias. Parasuraman, R. & Manzey, D. (2010). "Complacency and Bias in Human Use of Automation: An Attentional Integration." Human Factors, 52(3), 381–410.

[6] Mica Endsley's theory of situation awareness identifies three levels (perception, comprehension, and projection), all of which degrade when operators are removed from direct control of the systems they oversee. Endsley, M. R. (1995). "Toward a Theory of Situation Awareness in Dynamic Systems." Human Factors, 37(1), 32–64.

[7] Brynjolfsson and McAfee argue that the productive response to increasingly capable machines is not to compete against them but to race alongside them. Brynjolfsson, E. & McAfee, A. (2014). The Second Machine Age: Work, Progress, and Prosperity in a Time of Brilliant Technologies. W. W. Norton.

Michael, this is a rich piece that I had put on my weekend to-do list. “The doing was the knowing” is a beautiful line. And you have named something important: that the verbs are not merely tasks but the mechanism through which competence forms. I want to affirm that and then push on it.

You propose three layers: data, operations, intent. But as I re-read your piece, I started thinking: we have always had those three layers. Organizations have long distributed them across roles and ranks. Some people collect and curate data. Others do the operational work, the sorting, weighing, reasoning, executing. Others sit at the level of strategy and intent. The hierarchy is not new. What is new is that the middle layer is thinning.

And here is where I want to press you, my friend. You write as though the displacement of the doers opens a path for more people to move into the intent layer. But in my experience, the people who operated well at the intent layer were never working in isolation from the doing. They depended on the operational layer not just for execution but for signal. The doers surfaced the anomalies, the frictions, the patterns that did not fit the plan. Strategy was not formulated in a vacuum and then handed down. It was refined continuously by what the doing revealed.

So if an agent now does the doing, and does it at the speed you rightly hint at, what feeds the thinkers? How do the people at the intent layer retain the capacity to parse what matters and to formulate new objectives, rather than merely presiding over churn? The river is flowing, but standing on its banks does not make you responsible for where it goes. Speed without interpretive friction does not produce better intent. It produces the illusion of control.

This connects to your opening, which moved me. Your children (and my children) are negotiating the boundary in real time, at the kitchen table. And that is precisely what worries me most. We are asking our children to develop judgment about when to delegate and when to engage, in a paradigm that we ourselves have not yet mapped. You are teaching your kids well. But who is designing the broader system? Who is incentivizing the leaders in education, in policy, in technology to sit together and say: we do not yet know what the new formation looks like, but we must build it anyway, and we must build it with the developmental needs of children at the center, not as an afterthought?

We cannot train children for a world we have not yet described. But we can protect the conditions under which they develop the capacity to navigate it: time, friction, encounter, the slow work of earning understanding rather than receiving it. That, to me, is the deeper challenge.

Thank you for writing it. I look forward to where you take this next.

P.S. LinkedIn cut off my note, so I decided to write a longer comment here.