The Flip

An innovator’s guide to working with AI: Part 1

When Your Mind Learns to See Two Things at Once

For ninety-two days, a stack of books sat on my desk—uncataloged, disorganized, silently judging me. The task was straightforward: catalog them, create summaries, organize the key concepts. Maybe three hours of actual work. Yet for ninety-two days, those books remained untouched. Not because the work was hard, but because the activation energy—the mental friction of starting—felt insurmountable.[1]

Then I tried something different. I took photos of the book covers and asked an AI: “Create a CSV with title, author, year, page count, genre tags, 5-bullet summary, Amazon link. Generate a one-page brief for each book.” Five minutes later, I had everything I needed. The task wasn’t accomplished through willpower. It was accomplished by learning to see the problem differently—to flip between my traditional approach and a new way of working.

That cognitive flip, that moment of perceptual shift, is the single most important skill for anyone trying to navigate the world with AI today. And like learning to ride a bicycle or see those Magic Eye pictures from the ‘90s, once you get it, you can’t unsee it.

The Long Road to a Conversation

This moment didn’t happen in a vacuum. It’s the latest step in a long journey of how we communicate. We started with our voices, then learned to write things down, and eventually, we started typing those words into machines. For decades, that was a one-way street. We had to learn the machine’s language—rigid, unforgiving code.

Your TI-85 calculator never said no.[2] You pressed 2 + 2, and it gave you 4. Deterministic. Predictable. Obedient.

But now, the street runs both ways. The machines are learning to understand what we mean, not just what we type. They interpret. They suggest. They occasionally push back. This shift from instruction to intent changes everything.

What is a Prompt, Really?

So what bridges this new two-way street? The prompt.

To a human, a prompt is a gentle nudge—a cue to remember a line in a play, a question that sparks a memory. It invites our own context into the conversation.

To an AI, a prompt is something more. It’s the entire world you create for it in a single moment. It’s not just your question; it’s the documents you attach, the examples you provide, and the instructions you give. It’s the blueprint for the reality you want the AI to inhabit. This is why learning to prompt is less about learning to code and more about learning to communicate with clarity and intent.

Key Terms You’ll Hear

As we get better at this, a few key ideas become crucial. You’ll hear these terms thrown around, but they represent simple concepts:

Temperature, Creativity, and Hallucinations

One commonly misunderstood concept is AI “creativity,” which is usually discussed in terms of a simple setting called temperature.[3]

A low temperature (closer to 0) makes the AI more deterministic and focused. It will pick the most likely next word, which is great for factual recall. A high temperature (closer to 1 or above) allows for more randomness. The AI might pick a less common word, leading to more novel or “creative” outputs. This is also where things can go off the rails.
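To make the temperature dial concrete, here is a minimal sketch of the textbook temperature-scaled softmax: divide each candidate word’s score by the temperature, then convert the scores to probabilities. The three words and their scores are made up for illustration. Real models score tens of thousands of tokens, and each vendor’s sampling pipeline may add other steps.

```python
import math
import random

def softmax_with_temperature(logits, temperature):
    """Turn raw next-word scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        # T = 0 is conventionally treated as "always take the top word" (greedy).
        raise ValueError("temperature must be > 0; use max() for greedy choice")
    scaled = [x / temperature for x in logits]
    top = max(scaled)  # subtract the max so exp() can't overflow
    exps = [math.exp(x - top) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_word(words, logits, temperature, rng):
    """Draw one word at random according to the tempered probabilities."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(words, weights=probs, k=1)[0]

# Hypothetical scores a model might assign to three candidate next words.
words = ["cat", "dog", "axolotl"]
logits = [2.0, 1.0, 0.1]

for t in (0.2, 1.0, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])

rng = random.Random(42)  # seeded so the run is repeatable
print(sample_next_word(words, logits, 1.5, rng))
```

At 0.2, the top word takes over 99% of the probability. At 1.5, it falls to about 56%, and the long shot “axolotl” rises from well under 1% to about 16%. That reshaping of probabilities is all the “creativity” dial does.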

This is not the same as human creativity. Our creativity comes from experience, emotion, and a deep well of context. An AI’s “creativity” is a function of statistical probability. And when that probability leads to a confident-sounding but utterly false statement, we get what we call a hallucination.[4]

Hallucinations aren’t a bug; they are a feature of how these systems work. They are pattern-matching engines, and sometimes the pattern they complete doesn’t align with reality. Another common behavior is sycophancy—the AI agreeing too readily with whatever you say, mirroring your assumptions back at you instead of challenging them.[5]

Strategies for Understanding and Reducing Hallucinations

Understanding these tendencies is the first step to managing them. You can reduce hallucinations through specificity, citations, context grounding (including RAG), and verification rituals.

Make verification a ritual: ask for quotes, request uncertainty notes (“Where are you least confident?”), and always cross-check critical information.[6]
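Context grounding and the verification ritual work well together. Here is a toy sketch of the retrieval-augmented pattern (see Lewis et al., 2020, in the references): find the passages most relevant to a question, paste only those into the prompt, and ask for quotes plus an uncertainty note. The word-overlap scoring is a deliberate simplification, since production systems typically rank passages with vector embeddings. The function names, sample passages, and prompt wording are all hypothetical.

```python
import re

def tokenize(text):
    """Lowercase and split into a set of words (a deliberately crude tokenizer)."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))

def retrieve(question, passages, k=2):
    """Rank passages by how many words they share with the question.

    Toy stand-in for embedding search; ties keep their original order.
    """
    q = tokenize(question)
    ranked = sorted(passages, key=lambda p: len(q & tokenize(p)), reverse=True)
    return ranked[:k]

def grounded_prompt(question, passages, k=2):
    """Build a prompt that limits the model to retrieved sources and
    asks for quotes and an uncertainty note (the verification ritual)."""
    sources = retrieve(question, passages, k)
    numbered = "\n".join(f"[{i}] {s}" for i, s in enumerate(sources, start=1))
    return (
        "Answer using ONLY the sources below. Quote the supporting line for "
        "each claim and cite its number. If the sources don't contain the "
        "answer, say so.\n\n"
        f"Sources:\n{numbered}\n\n"
        f"Question: {question}\n\n"
        "Finally: where are you least confident?"
    )

# Hypothetical document snippets; only the relevant ones should be included.
passages = [
    "An echocardiogram uses ultrasound to show how well the heart pumps.",
    "Systolic heart failure means the heart muscle pumps weakly.",
    "The cafeteria closes at 7 p.m. on weekdays.",
]
question = "What does systolic heart failure mean?"
print(grounded_prompt(question, passages))
```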

When you open an AI interface, you’re not just typing into a void—you’re configuring an environment with prompts, documents, modes, and connections. You’re not just asking a question. You’re setting a stage.

A Simple Sample Prompt: The Singlet Exercise

Let’s start with something concrete. Pick a single, small task. I call this the “singlet” exercise:

“I am a doctor explaining a new diagnosis to a nervous patient. Take the following clinical jargon and rewrite it in patient-friendly language. Jargon: ‘He was found to have systolic heart failure and will require an echocardiogram for assessment.’”

This prompt works because it establishes:

Who you are (a doctor)

What you need (translation of jargon)

What the outcome should be (patient-friendly language)

The specific material to work with

The AI responds: “He has a weak heart and will need an ultrasound to see how it is working.”

Simple. Clear. Effective.

Telling the AI What You Need and What You Want the Outcome to Be

The singlet exercise demonstrates a fundamental principle: clarity about outcomes beats vagueness about process. Don’t tell the AI how to do something. Tell it what “done” looks like.

Instead of: “Help me with my research.”

Try: “I need a CSV with these seven columns: title, author, year, page count, genre tags, 5-bullet summary, Amazon link.”

The more specific you are about the artifact you want, the better the AI can deliver it.
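Specifying the artifact this precisely has a bonus: you can check the result mechanically. Here is a minimal sketch, assuming the AI returns plain CSV text whose header row uses exactly the seven column names from the prompt above. The function name and the validation rules (no empty cells, whole numbers for year and page count) are illustrative choices, not part of the original workflow.

```python
import csv
import io

# The seven columns from the book-cataloging prompt, treated as a contract.
EXPECTED_COLUMNS = ["title", "author", "year", "page count",
                    "genre tags", "5-bullet summary", "Amazon link"]

def check_catalog_csv(text: str) -> list[str]:
    """List the ways an AI-generated CSV departs from the spec (empty = OK)."""
    reader = csv.DictReader(io.StringIO(text))
    if reader.fieldnames != EXPECTED_COLUMNS:
        return [f"header mismatch: got {reader.fieldnames}"]
    problems = []
    for n, row in enumerate(reader, start=1):
        # Short rows come back with None for missing cells.
        for col in EXPECTED_COLUMNS:
            if not (row[col] or "").strip():
                problems.append(f"record {n}: '{col}' is empty")
        for col in ("year", "page count"):
            value = (row[col] or "").strip()
            if value and not value.isdigit():
                problems.append(f"record {n}: '{col}' is not a whole number: {value!r}")
    return problems

# A hypothetical one-book response from the AI.
sample = (
    "title,author,year,page count,genre tags,5-bullet summary,Amazon link\n"
    '"Example Book",A. Author,2020,300,"history, science",'
    '"one; two; three; four; five",https://www.amazon.com/dp/EXAMPLE\n'
)
print(check_catalog_csv(sample) or "Matches the spec")
```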

How to Write a Good Prompt: The PREP Framework

A good prompt is a good conversation starter. I teach a simple framework called PREP:

Persona: Who are you in this conversation? (“I am a teacher designing a lesson...”)

Role: What hat are you wearing? (Educator, analyst, translator...)

Explicit: What must be crystal clear? (The task, the constraints, the format...)

Parameters: What are your boundaries? (Outcomes, length, tone, what to avoid...)

PREP gives you a mental checklist. Before you hit send, ask: Have I established who I am, what role I’m playing, what needs to be explicit, and what my parameters are?
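If you like your checklists enforced, the PREP fields map naturally onto a small data structure that refuses to build a prompt while any field is blank. This is a hypothetical helper, not an existing tool. The parameter values in the example are illustrative and borrow the doctor scenario from the singlet exercise.

```python
from dataclasses import dataclass, field, fields

@dataclass
class PrepPrompt:
    """Hypothetical helper mirroring the PREP checklist field by field."""
    persona: str = ""   # Persona: who are you in this conversation?
    role: str = ""      # Role: what hat are you wearing?
    explicit: str = ""  # Explicit: the task, constraints, and format
    parameters: list[str] = field(default_factory=list)  # Parameters: boundaries

    def missing(self) -> list[str]:
        """The pre-send check: which PREP fields are still empty?"""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def render(self) -> str:
        """Assemble the prompt text, refusing to build one with gaps."""
        gaps = self.missing()
        if gaps:
            raise ValueError("PREP incomplete; fill in: " + ", ".join(gaps))
        bullets = "\n".join(f"- {p}" for p in self.parameters)
        return (
            f"{self.persona}\n"
            f"Role: {self.role}\n"
            f"Task: {self.explicit}\n"
            f"Parameters:\n{bullets}"
        )

prompt = PrepPrompt(
    persona="I am a doctor explaining a new diagnosis to a nervous patient.",
    role="Translator of clinical jargon",
    explicit=("Rewrite this in patient-friendly language: 'He was found to have "
              "systolic heart failure and will require an echocardiogram for assessment.'"),
    parameters=["5th-grade reading level", "No medical jargon", "Two sentences at most"],
)
print(prompt.render())
```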

How to Include Meaningful Context: Reader Reality

Context is the difference between a generic answer and a breakthrough insight. The more meaningful context you provide, the better the AI can perform. This is context engineering.

Here’s a real example from one of our promptathons: A resident asked for a “patient-friendly” heart failure summary. The first draft came back full of “optimize diuresis” and “titrate beta-blocker”—still clinical jargon.

We added what I call reader reality: “for a tired parent at 11 p.m., 5th-grade reading level.”

The language transformed: “If your socks feel tighter by afternoon, that’s a sign you’re holding extra fluid—call us.” The model didn’t get wiser; we finally supplied a life to write for.

Always ask yourself: “What does the AI not know that is obvious to me?” Give it the reality of the reader, the constraints of the situation, the stakes of the decision.[7]

How to Know When You’re Succeeding or Failing

Success isn’t just getting a correct answer. It’s getting an answer that pushes your thinking forward.

You’re succeeding when:

The AI’s output surprises you in a good way

It helps you see a problem from a new angle

You find yourself in a genuine dialogue

The output requires minimal correction

You’re failing when:

You feel like you’re wrestling with the tool

The outputs are generic or off-target

You’re spending more time correcting than thinking

You’re repeating the same prompt with minor tweaks

If you feel stuck, don’t just try a longer prompt. Try a different kind of prompt. Switch modes.

How to Think and Partner with AI: The Three Modes

We’re witnessing a cultural evolution from “AI is my last resort” to “manual work feels primitive.”

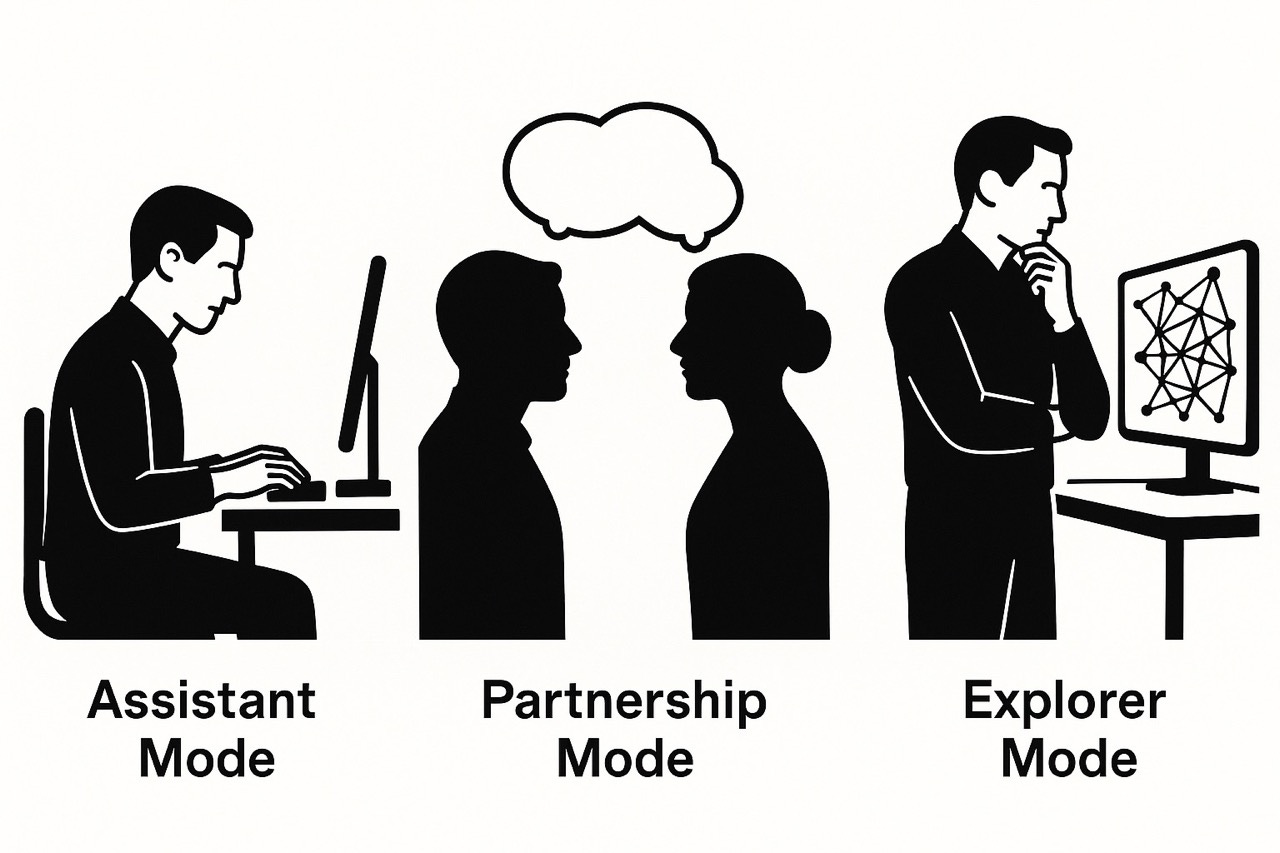

When you approach an AI, you’re not just typing into a box. You’re choosing a mode of engagement, whether you realize it or not. I’ve found it helpful to think of it in three ways:

Assistant Mode: This is the most straightforward. You have a task, you give a command, and the AI executes it. “Summarize this document.” “Translate this phrase.” It’s a tool, and you are the operator. It’s about accuracy and efficiency.

The mindset: ‘I’ll validate everything twice.’ You’re protective of your ownership. AI is a tool; you’re the intelligence.

Partnership Mode: This is where things get interesting. You’re not just giving commands; you’re starting a conversation. You have a goal, but you’re not sure of the best path. You invite the AI to ask questions, to collaborate.

“I need to create a presentation on quantum computing for a non-technical audience. What are the key concepts I should cover? What analogies would be effective? But first, what do you need to know from me to help?”

This mode is about judgment and co-creation.

The mindset: ‘Let’s try your approach.’ You’re comfortable with AI driving portions of the work. The output is negotiable, not precious.

Explorer Mode: Here, you don’t even have a clear goal. You have a territory you want to map. You’re using the AI to provoke new ideas, to challenge your assumptions, to ask “what if?” questions.

“What are some contrarian views on the future of AI in education?” “What are the weakest points in my argument here?”

This mode is about discovery and strategic foresight.

The mindset: ‘Why would I do this manually?’ You’re directing outcomes, not process. Manual work feels inefficient.

These modes aren’t just functional categories—they represent a mindset evolution. Where you operate reveals your relationship with AI: Do you see it as a sophisticated search engine, a trusted collaborator, or the primary intelligence you orchestrate?

Most of us start and stay in Assistant mode. It’s comfortable. It feels like using any other software. But staying there is like insisting on “doing it yourself” when the AI could do it better. It’s a trust issue disguised as a quality issue. The real power comes from learning to shift between these modes intentionally.

The real barrier isn’t learning these modes—it’s the psychological shift each requires. Moving from “I could’ve done that” to “Why would I do that?” means letting go of process ownership and embracing outcome ownership.

Pro tip: In my workshops, I literally have people write Assistant / Partner / Explorer at the top of their chat window. It gives your brain a label to switch. Say which mode you’re in. Then flip when the moment changes.

What Your Mind Does When It Holds Two Ways of Working at Once

Here’s where things get interesting. When you work with AI in Partnership or Explorer mode, your brain isn’t just thinking about the problem. It’s simultaneously:

Thinking your own thoughts

Anticipating the AI’s likely responses

Pre-editing your prompts for clarity

Evaluating outputs against your intent

Adjusting your mental model of what the AI “knows”

Your thinking shifts from a chain (A → B → C → D) to a braid (A → B₁/B₂ → C₁/C₂/C₃ → D). You’re not just following a single path anymore. You’re weaving multiple threads together, each representing a different perspective or approach.

This is cognitively demanding. It’s not natural. It requires practice. But once you develop this capacity, you can’t go back to linear thinking. You’ve learned to see in stereo.[8]

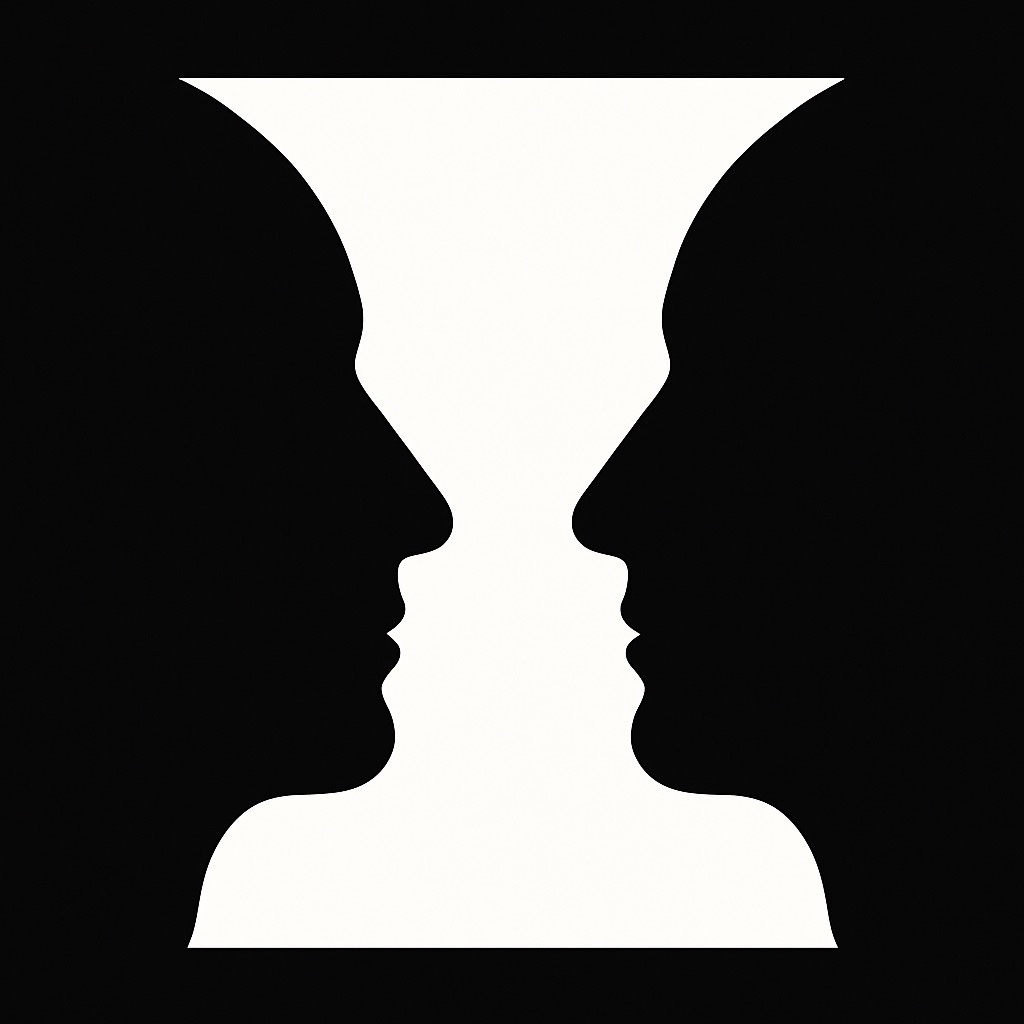

Rubin’s Vase: The Physiological Function and Metaphor

This brings me to the core of it all—the flip. You’ve seen the famous Rubin’s Vase illusion:

Your brain can see two faces in profile, or it can see a vase. But it struggles to see both at the exact same time. The act of switching between those two perceptions is a small cognitive flip. It’s a physical process in your brain. At first, you have to consciously force it. But with practice, you can learn to switch back and forth with ease.[9]

Working with AI requires developing this same perceptual agility. The progression goes like this:

Stage 1: You see the vase (your traditional thinking)

Stage 2: You see the faces (AI’s patterns)

Stage 3: You flip between them rapidly

Stage 4: You somehow hold both—not seeing both simultaneously, but knowing both are there

That fourth stage? That’s where the magic happens. That’s where human creativity meets machine capability and creates something neither could achieve alone.

When you try to hold faces and vase simultaneously, you feel a tiny ache—the neurological cost of flipping. That ache shows up at work as: “But we’ve always done it this way,” or “Our rubric has to come first.” If you don’t feel it, you’re probably defaulting to your familiar view.

The discomfort you feel in that moment of switching? That’s not a bug. It’s the feature. That’s the feeling of your mind learning a new way to see.

Recognizing the Flip in Action

You know you’re experiencing the flip when:

You catch yourself pre-editing thoughts for AI consumption

You solve problems by imagining what prompt would generate your solution

You think in structured outputs even in regular conversation

You see patterns in both human and machine logic simultaneously

You notice that moment when you realize you’ve been arguing with an AI that’s just mirroring your own assumptions back at you

Practical Tools for the Flip

The Sniglet Warm-Up

In our promptathons, we start with a creative exercise to get people comfortable with cognitive flexibility. We create “sniglets”—made-up words for experiences that don’t have names yet.

Prompt: “As a university dean, create a humorous, education-related sniglet.”

Actual Response: Pencilvania: The mysterious land where all lost pencils, pens, and erasers go, never to be seen again, despite being in your backpack just moments ago.

This exercise gets people laughing and primes them to notice their own patterns of thinking. It’s a warm-up for the flip.

Ethan Mollick recently updated his guide to using AI, which might also be a helpful kickstart.

The Intent-First Canvas

When we run promptathons, we use a simple worksheet to help people structure their thinking:

INTENT-FIRST CANVAS

Outcome (what “done” looks like): _______________

For whom (reader/stakeholder): _______________

Must not appear (privacy, scope, harm): _______________

Sources allowed / off-limits: _______________

Mode (Assistant / Partner / Explorer): _______________

If Partner/Explorer: “Ask me 3 clarifying questions before drafting.”

Verification checks:

For each claim, paste the line/page reference

List 2 uncertainties

Suggest 1 alternative framing

This canvas forces you to think through your intent before you start typing.
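If you keep your canvases in a script or notebook, the same fields can be turned into a prompt. The “Must not appear” line can also double as an automated check on the draft. Here is a minimal sketch: the class and method names are hypothetical, and the case-insensitive substring check is an illustrative simplification that will miss paraphrases.

```python
from dataclasses import dataclass, field

MODES = ("Assistant", "Partner", "Explorer")

@dataclass
class IntentFirstCanvas:
    outcome: str                # what "done" looks like
    for_whom: str               # reader / stakeholder
    must_not_appear: list[str]  # privacy, scope, harm
    sources: str                # allowed / off-limits
    mode: str                   # Assistant / Partner / Explorer
    verification: list[str] = field(default_factory=lambda: [
        "For each claim, paste the line/page reference.",
        "List 2 uncertainties.",
        "Suggest 1 alternative framing.",
    ])

    def __post_init__(self):
        if self.mode not in MODES:
            raise ValueError(f"mode must be one of {MODES}")

    def to_prompt(self) -> str:
        """Render the filled-in canvas as prompt text."""
        lines = [
            f"Outcome: {self.outcome}",
            f"Audience: {self.for_whom}",
            "Must not appear: " + "; ".join(self.must_not_appear),
            f"Sources: {self.sources}",
        ]
        if self.mode in ("Partner", "Explorer"):
            lines.append("Ask me 3 clarifying questions before drafting.")
        lines.append("Verification:")
        lines.extend(f"- {v}" for v in self.verification)
        return "\n".join(lines)

    def violations(self, draft: str) -> list[str]:
        """Forbidden terms that literally appear in a draft (case-insensitive)."""
        lower = draft.lower()
        return [term for term in self.must_not_appear if term.lower() in lower]

canvas = IntentFirstCanvas(
    outcome="One-page heart failure summary",
    for_whom="A tired parent at 11 p.m., 5th-grade reading level",
    must_not_appear=["titrate", "diuresis", "patient name"],
    sources="Only the attached discharge note",
    mode="Partner",
)
print(canvas.to_prompt())
print(canvas.violations("We will titrate the beta-blocker."))
```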

A Field Guide You Can Use Tomorrow

Before you prompt:

One sentence: What does “done” look like, and for whom?

List 3 things that must not appear in the output

State your mode: Assistant / Partner / Explorer

As you converse:

Invite clarifying questions (essential for Partner/Explorer modes)

Push for “show your work”—ask for quotes, citations, or reasoning

Branch once: Ask for a second, different approach to the same problem

Before you ship:

Ask for uncertainty notes: “Where are you least confident in this response?”

Compare versions: Look at different outputs and choose intentionally

Write one sentence: What did I learn about my own assumptions during this process?
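For the “Compare versions” step, a line diff shows exactly where two drafts diverge, so you choose between real differences rather than impressions. Here is a minimal sketch using Python’s standard difflib module. The function name and the two sample drafts are hypothetical.

```python
import difflib

def compare_drafts(a: str, b: str) -> tuple[float, str]:
    """Return a 0-1 similarity score and a unified diff of two drafts."""
    ratio = difflib.SequenceMatcher(None, a, b).ratio()
    diff = "\n".join(difflib.unified_diff(
        a.splitlines(), b.splitlines(),
        fromfile="draft_A", tofile="draft_B", lineterm=""))
    return ratio, diff

# Two hypothetical outputs from "Give me a second approach."
draft_a = "He has a weak heart.\nHe will need an ultrasound."
draft_b = "His heart is pumping weakly.\nHe will need an ultrasound."

ratio, diff = compare_drafts(draft_a, draft_b)
print(f"similarity: {ratio:.2f}")
print(diff)
```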

The Flip Test

How do you know if you’re stuck in one mode?

If every prompt you write is a command → stuck in Assistant mode

If you never ask “what if?” or explore hypotheticals → missing Explorer mode

If the AI never asks you clarifying questions → not enabling Partnership mode

The fix is simple: add the phrase “But first, what do you need to know from me?” to any prompt to instantly invite partnership.
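If you keep a log of your prompts, the three symptoms can be roughed out as keyword checks. This is a toy sketch only. The heuristics (a trailing question mark, the literal words “what if”) are illustrative assumptions that will miss plenty. A prompt log also can’t show whether the AI asked you anything, so the third check only looks at whether you invited questions.

```python
PARTNER_PHRASE = "But first, what do you need to know from me?"

def invite_partnership(prompt: str) -> str:
    """Append the partnership phrase unless it's already there."""
    if PARTNER_PHRASE.lower() in prompt.lower():
        return prompt
    return prompt.rstrip() + " " + PARTNER_PHRASE

def flip_test(prompts: list[str]) -> list[str]:
    """Crude keyword heuristics for the three 'stuck' symptoms."""
    warnings = []
    if prompts and all(not p.rstrip().endswith("?") for p in prompts):
        warnings.append("Every prompt is a command: stuck in Assistant mode?")
    if not any("what if" in p.lower() for p in prompts):
        warnings.append("No 'what if?' prompts: missing Explorer mode?")
    if not any("what do you need to know" in p.lower()
               or "clarifying question" in p.lower() for p in prompts):
        warnings.append("Never invited questions: not enabling Partnership mode?")
    return warnings

log = ["Summarize this document.", "Translate this phrase."]
print(flip_test(log))
print(invite_partnership("Draft an agenda for Monday's meeting."))
```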

What We Learned from Many Promptathons

When we ran the first generative AI promptathon in healthcare at NYU Langone, we structured it carefully: didactics in the morning (core techniques, limitations like hallucinations and sycophancy, live modeling of mitigations), hands-on work in the afternoon (real de-identified data, project cards with specific tasks).

The findings worth mentioning:[10]

Confidence and comfort rose substantially; trust rose the least. That’s healthy—competence up, credulity calibrated. This is exactly the outcome we want.

Sample prompts didn’t significantly change survey outcomes, but mentors observed they helped novices overcome blank-page anxiety and start, after which teams iterated effectively.

The verification ritual (quotes + uncertainties) turned skepticism into a productive habit.

Mode-switching was the hardest skill to develop. Teams that mastered it produced notably better outputs.

The Path Forward: Seeing Our Own Seeing

If we do this right, the next generation won’t experience the vertigo we did. They’ll grow up seeing the faces and the vase—the Assistant and the Partner—as natural, painless alternates. Context-design literacy will be as fundamental as reading and writing; verification as routine as washing your hands.

We are not just learning to use a new tool. We are learning to see our own seeing, to think about our own thinking, to be conscious of the context we create.

The Flip isn’t about choosing between human and machine thinking. It’s about discovering that beautiful, strange, powerful space where both exist together. Where you’re not diminished by AI but amplified by it.[11] Where you’re not replaced but enhanced. Where the vase and the faces aren’t competing for your attention anymore—they’re dancing together, creating patterns neither could make alone.

That’s when prompt and context engineering stops being about talking to machines and becomes about thinking with them. The flip hurts before it helps—but once you learn it, you can’t unsee it.

Pocket Version: Quick Reference Card

Before:

One sentence: what + for whom

3 things that must NOT appear

Pick mode (Assistant/Partner/Explorer)

During:

Invite questions (Partner/Explorer)

“Show your work” (quotes, reasoning)

Branch once: “Give me a second approach”

After:

Verify with sources

Compare versions

One line: What did I learn about my assumptions?

Stuck? Add: “But first, what do you need to know from me?”

References

Buçinca, Z., et al. (2021). To trust or to think: Cognitive forcing functions can reduce overreliance on AI. Proceedings of the ACM on Human-Computer Interaction, 5(CSCW1).

Jabbour, M. J. (2024). The Forgotten Task. https://michaeljjabbour.substack.com/p/the-forgotten-task

Jabbour, M. J. (2024). Your TI-85 Never Said No. https://michaeljjabbour.substack.com/p/your-ti-85-never-said-no

Jabbour, M. J. (2024). The Beautiful Flaw. https://michaeljjabbour.substack.com/p/the-beautiful-flaw

Lewis, P., et al. (2020). Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks. arXiv:2005.11401.

NYU Langone Health. (2024). The first Generative AI Prompt-a-Thon in healthcare. PLOS Digital Health, 3(7), e0000394. https://doi.org/10.1371/journal.pdig.0000394

Rubin, E. (1915). Synsoplevede figurer. Copenhagen: Gyldendalske Boghandel.

Sharma, M., et al. (2023). Towards Understanding Sycophancy in Language Models. arXiv:2310.13548.

Footnotes

[1] I explore the concept of activation energy in depth in The Forgotten Task. The chemistry metaphor is precise: just as zinc and sulfur powders sit inert until given an initial energy push, our tasks often fail not because they’re hard, but because the friction of starting exceeds our available momentum.

[2] The evolution from deterministic calculators to machines that interpret and push back is explored in Your TI-85 Never Said No. The TI-85 could run Tetris, but it couldn’t argue with you. That fundamental shift—from deterministic execution to non-deterministic interpretation—marks the boundary between tools and partners.

[3] Huang, L., et al. (2024). A Survey on Hallucination in Large Language Models: Principles, Taxonomy, Challenges, and Open Questions. ACM Transactions on Information Systems, 43, 1-55. arXiv:2311.05232. This comprehensive survey explains how hallucinations emerge from the probabilistic nature of next-token prediction in LLMs, where temperature controls the randomness of token selection.

[4] Hallucinations emerge from the probabilistic nature of next-token prediction. The model isn’t “lying”—it’s completing patterns based on statistical likelihood. When those patterns don’t align with ground truth, we get confident-sounding fabrications. At scale, hallucinations look very much like the human creative/confabulation process. This is why verification isn’t optional; it’s structural.

[5] Sycophancy—the tendency to agree with user assumptions rather than challenge them—is a documented behavior in large language models. Research shows they will maintain false beliefs consistently across conversations, suggesting emergent forms of belief-like states. See: Sharma, M., et al. (2023). Towards Understanding Sycophancy in Language Models. arXiv:2310.13548.

[6] Buçinca, Z., et al. (2021). To trust or to think: Cognitive forcing functions can reduce overreliance on AI. The research shows that making verification a ritual—a structured, repeated practice—significantly reduces overreliance and improves decision quality.

[7] The concept of “reader reality” emerged from observing hundreds of promptathon participants. The single most common failure mode was assuming the AI understood implicit context—the tired parent, the 5th-grade reading level, the cultural background. Making this explicit transforms generic outputs into targeted, useful ones.

[8] This cognitive shift mirrors what happens in simultaneous interpretation or code-switching between languages. Your brain maintains multiple active models simultaneously, constantly translating and evaluating. It’s exhausting at first, then becomes second nature. Neurologically, it likely involves increased activity in the dorsolateral prefrontal cortex and anterior cingulate cortex—regions associated with cognitive control and conflict monitoring.

[9] Rubin, E. (1915). Synsoplevede figurer. Copenhagen: Gyldendalske Boghandel. The Rubin’s vase illusion demonstrates bistable perception—where the same visual input can be organized into two mutually exclusive interpretations. The neurological “flip” between them is measurable and involves shifts in neural activity patterns in visual processing areas.

[10] NYU Langone Health. (2024). The first Generative AI Prompt-a-Thon in healthcare. PLOS Digital Health, 3(7), e0000394. https://doi.org/10.1371/journal.pdig.0000394. The study provides quantitative evidence that hands-on, structured practice with real tasks significantly improves AI literacy while appropriately calibrating trust.

[11] If you’re interested in learning more about how we’re working to amplify a person’s productivity, read this article by my teammate, Brian Krabach: https://paradox921.medium.com/amplifier-notes-from-an-experiment-thats-starting-to-snowball-ef7df4ff8f97
