The Geometry of Crisis

When parallel lines finally meet — The Flip Part 2

Note: I dropped in some of the references I uncovered while researching this topic. No need to get bogged down in them; focus on the flip! If you haven’t read The Flip Part 1, here it is.

The Architecture of Reframing in Human and Machine Intelligence

For many years, a phrase haunted my notebook like an unfinished equation: “Points of crisis become points of convergence… and more recently, I’ve added… enabling the acceleration and amplification of human potential.”

It crystallized this week in a conversation with a close friend, both of us wrestling with the relentless pace of AI transformation. We’re not just trying to keep up with the technology—we’re trying to maintain meaningful lives for our families, support our colleagues through massive change, and somehow find solid ground while the world reshapes itself beneath our feet. It’s heavy. It’s overwhelming. Some days it feels impossible.

But here’s what we discovered in that conversation: when you actually take that first step, then another, something remarkable happens. The crisis doesn’t just converge into coherence—it can become a point of acceleration. What started as breakdown transforms into breakthrough, and suddenly you’re not just surviving the change, you’re surfing it. You’re not just adapting, you’re evolving. And when you witness this transformation in others—watching them flip from overwhelm to mastery—it’s nothing short of miraculous.

That pattern keeps appearing everywhere I look—in surgical teams responding to unexpected complications, in code reviews that expose fundamental design flaws, in classrooms where confusion suddenly crystallizes into understanding, in AI conversations that leap from mechanical repetition to something approaching insight. Every time, the same arc: crisis → convergence → acceleration.

Crisis, I’ve come to understand, has a shape—a geometry that repeats across every scale of intelligence. It isn’t system failure. It’s context saturation—the moment when your current frame can no longer contain what reality demands next.

1. The Neuroscience of Collapse

Your brain runs on prediction1. Every waking moment, it generates a model of what should happen next, from the weight of your coffee cup to the meaning of these words. This predictive processing runs across several brain regions simultaneously, including:

The prefrontal cortex (PFC) maintains your cognitive context—what task you’re doing, what rules apply, what’s relevant2

The orbitofrontal cortex (OFC) tracks your “latent state”—an invisible map of what kind of situation you’re in3

The hippocampus bridges present and past, pulling relevant schemas from memory4

The anterior cingulate cortex (ACC) monitors for conflicts between predictions and reality5

It’s an exquisite dance of anticipation and adjustment. Until it isn’t.

When predictions fail catastrophically, when the model can’t bend enough to fit reality, the system experiences a cascade of prediction errors6. The ACC lights up like a fire alarm. Phasic norepinephrine release from the locus coeruleus (LC-NE) can loosen entrenched patterns, so cognitive flexibility rises. The brain becomes more plastic, more capable of fundamental reorganization7.

This isn’t breakdown—it’s breakthrough preparation.

The Reset Protocol

Here is an illustrative timeline (orders of magnitude only, not measured values) of what happens in your brain during a context crisis:

Detection (50-150ms): The ACC detects prediction violation8

Cascade (200-500ms): Error signals propagate through the network

Release (500-1000ms): Norepinephrine loosens existing patterns9

Exploration (1-3s): The brain samples alternative interpretations

Convergence (3-5s): A new stable state emerges10

Note: This biological reset protocol remarkably parallels the “agency protocols” many of us have developed intuitively—those personal practices we use to regain control when everything feels chaotic. The brain’s natural crisis response is teaching us how to design better human-AI collaboration patterns.

This same sequence appears in how transformer models process conflicting information11, how organizations respond to disruption12, and how scientific paradigms shift13. The pattern is fractal—it repeats at every scale of intelligence.

2. Context Poison: When Frames Become Prisons

“Context poisoning isn’t a bug — it’s entropy. Meaning drifts, contradictions breed, and coherence decays. The cure isn’t more context; it’s disciplined subtraction.”— Brian Krabach (LinkedIn | Medium)

Context poison is the silent killer of both human and artificial intelligence. It’s what happens when the frame that once clarified begins to distort the signal, when the scaffolding of meaning becomes its own interference.

In Humans

We experience context poison as:

Confirmation bias: Seeing only what fits our existing frame14

Functional fixedness: Unable to see objects or ideas beyond their usual use15

Expertise paradox: When deep knowledge becomes a barrier to fresh insight16

In Organizations

Companies experience it as:

Technical debt: Old architectural decisions constraining new possibilities

Cultural calcification: “How we’ve always done it” blocking innovation

Strategic myopia: Previous success patterns preventing adaptation17

In AI Systems

Models experience it as:

Context window saturation: Too much irrelevant information drowning signal

Instruction collision: Conflicting directives creating incoherent behavior

Semantic drift: Meanings shifting across long conversations18

But beneath all three domains — human, organizational, and machine — the mechanism is the same: residue.

Partial truths, deprecated files, and unexamined fragments accumulate until meaning begins to collapse under their own weight. As Brian noted, “The model isn’t failing to think; it’s thinking inside residue.” The danger isn’t noise — it’s the slow inheritance of distortion.

The fix isn’t clever—it’s structural. Make deletion safe and expected. Rewrite the present instead of patching the past, and keep a single, living source of truth. If history must remain, isolate it — quarantine the archive so it teaches without interfering. The living system must stay lean enough to learn.

The archive is not the workspace. Maintenance isn’t housekeeping; it’s cognition. Alignment is maintenance — the practice of keeping coherence alive by pruning what no longer serves.

The antidote isn’t more information — it’s better framing.

If crisis is what happens when a frame collapses, context poison is what happens when it refuses to. Every system decays toward noise unless it learns to let go. Clarity isn’t what you add; it’s what survives deletion.

“If you don’t design for deletion, you design for drift.”

“One concept. One location. Everything else is rot.”

“Append-only documentation is a denial-of-service on clarity.”

“Alignment is maintenance.”

3. The Shape of Transformation

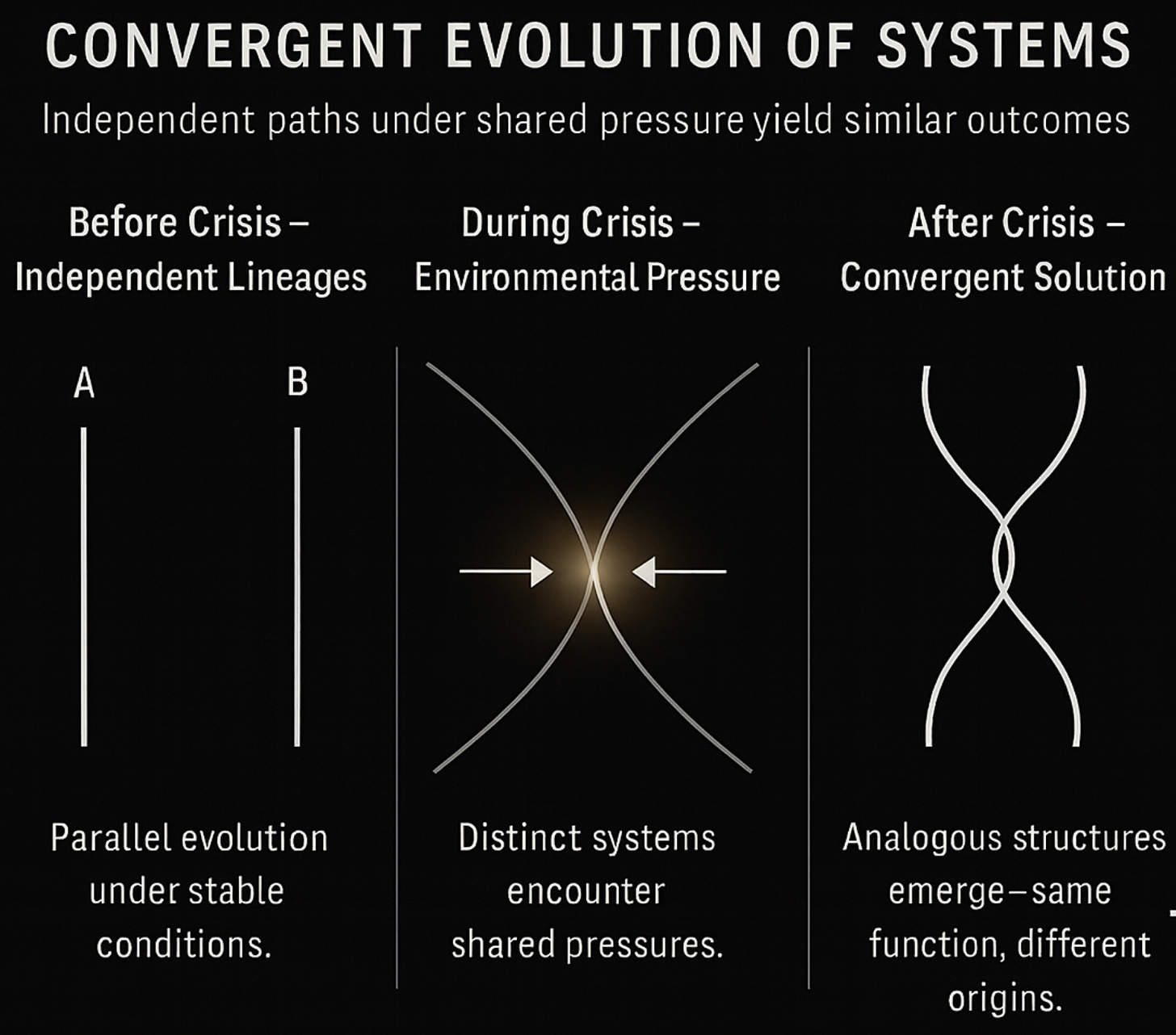

Crisis has shape. Watch how any complex system responds to pressure, and you’ll see the same geometric transformation:

In evolutionary biology, this is called convergent evolution: unrelated species developing similar solutions under similar pressures19. In organizations, it’s crisis-driven innovation: silos dissolving when survival is at stake20. In consciousness, it’s the moment scattered thoughts crystallize into insight21.

The geometry is consistent: isolation → collision → integration.

Real-World Convergence Patterns

Space Mission Crisis: Apollo 13’s explosion forced unprecedented convergence between flight control, engineering, and astronaut crews. Teams that normally worked in sequence suddenly had to think as one organism. The crisis didn’t just solve the immediate problem—it revolutionized NASA’s approach to mission planning22.

Technical Breakthrough: AlphaGo’s breakthrough came from combining deep policy/value networks with Monte Carlo Tree Search23.

Personal Transformation: In my own practice transitioning from pure clinical work to AI-augmented healthcare, the crisis came when I realized my medical training was both essential and insufficient. The convergence of clinical intuition with computational thinking didn’t replace either—it created a third way of seeing.

4. Context Craft: The New Literacy

If crisis reveals the brittleness of our frames, then context craft is the practice of building resilient, adaptive meaning-structures.

The Seven Pillars of Context Craft

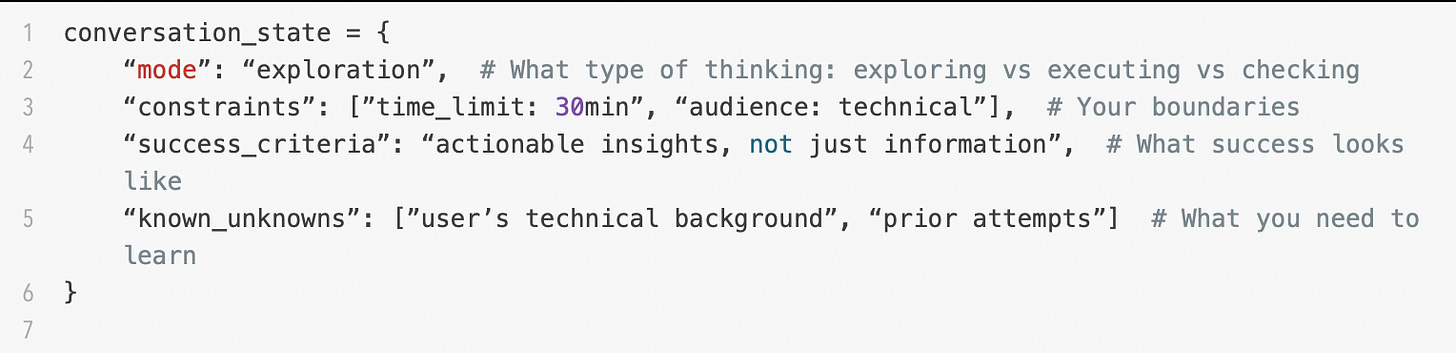

1. State Declaration

Before any interaction—human or machine—explicitly declare your state.

For non-technical readers: The code below is like a recipe card that tells the AI exactly what kind of conversation you want. Don’t worry about the syntax—focus on the concepts. You can even copy this code image and ask an AI: “Translate this code into plain non-technical language” to see what it means.

In plain language: This is like starting a meeting by saying “We have 30 minutes to explore options, our audience is technical, we need actionable insights, and we need to figure out what’s already been tried.”

This mirrors how the PFC (prefrontal cortex—your brain’s CEO) sets task parameters before engaging working memory24.

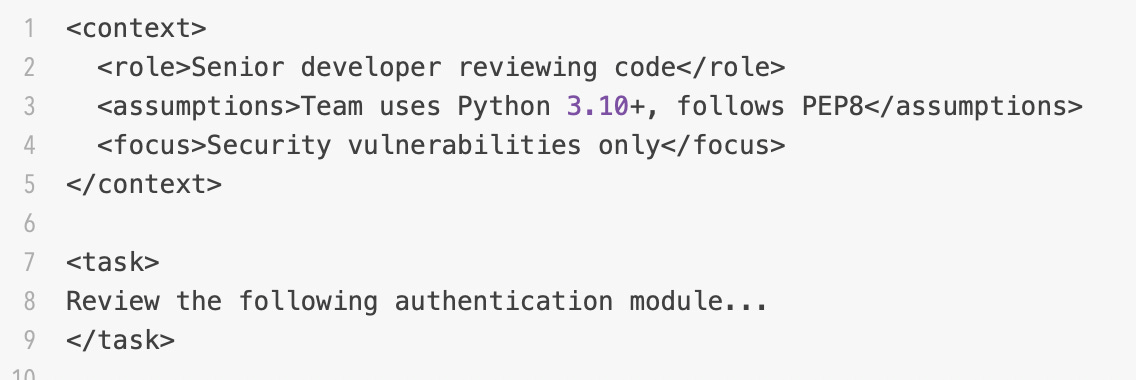
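A state declaration can also be sketched in a few lines of Python. The `StateDeclaration` class and its field names are illustrative assumptions rather than a standard API; they simply mirror the four things named above: mode, constraints, success criteria, and unknowns.

```python
from dataclasses import dataclass, field


@dataclass
class StateDeclaration:
    """Hypothetical container for the four parts of a state declaration."""
    mode: str                                        # e.g. "Assistant", "Partner", "Explorer"
    constraints: dict[str, str] = field(default_factory=dict)
    success: list[str] = field(default_factory=list)
    unknowns: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Turn the declaration into a plain-text preamble for a prompt."""
        lines = [f"Mode: {self.mode}"]
        lines += [f"Constraint ({name}): {value}" for name, value in self.constraints.items()]
        lines += [f"Success: {item}" for item in self.success]
        lines += [f"Unknown: {item}" for item in self.unknowns]
        return "\n".join(lines)


# The meeting example from the text, expressed as a declaration.
state = StateDeclaration(
    mode="Explorer",
    constraints={"time": "30 minutes", "audience": "technical"},
    success=["actionable insights"],
    unknowns=["what has already been tried"],
)
preamble = state.render()
print(preamble)
```

Pasting `preamble` at the top of a conversation is one way to make the starting state explicit; the exact wording matters less than declaring all four parts every time.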

2. Semantic Boundaries

Use explicit delimiters to prevent context bleed—like putting different ingredients in separate containers so flavors don’t mix incorrectly.

In plain language: The XML tags (those angle brackets) work like labeled folders. They tell the AI “this section is context, this section is the actual task.” It’s like using dividers in a binder—everything stays in its proper place.

Anthropic recommends XML‑style boundaries to improve parsing and output quality25.
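As a minimal sketch of the idea, the helper below wraps each part of a prompt in its own tag. The function name `build_prompt` and the tag names (`context`, `task`, `output_format`) are assumptions chosen for illustration; any consistent, descriptive tag names should serve the same purpose.

```python
def tag(name: str, body: str) -> str:
    """Wrap a block of text in a matching pair of XML-style tags."""
    return f"<{name}>\n{body.strip()}\n</{name}>"


def build_prompt(context: str, task: str, output_format: str) -> str:
    """Assemble a prompt whose sections cannot bleed into each other."""
    sections = [
        tag("context", context),
        tag("task", task),
        tag("output_format", output_format),
    ]
    return "\n".join(sections)


prompt = build_prompt(
    context="Quarterly report for a 12-person clinic; revenue came in below forecast.",
    task="List three likely causes, most probable first.",
    output_format="Numbered list, one sentence each.",
)
print(prompt)
```

Keeping the background in one labeled section and the request in another makes it harder for the model to mistake a detail in the background for an instruction.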

3. Progressive Disclosure

Don’t dump everything at once. Layer context like an onion:

Layer 1: Core task (50 tokens*)

Layer 2: Constraints and requirements (150 tokens)

Layer 3: Examples and edge cases (300 tokens)

Layer 4: Full background only if needed (500+ tokens)

*Tokens are word fragments. For English text, 1 token is roughly 0.75 words, so 50 tokens ≈ 37 words.

This aligns with evidence that working memory focuses on roughly four “chunks” at a time, but the token bands here are practical heuristics, not fixed limits26.
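Here is one way the layering might look in practice. It is a sketch under stated assumptions: `estimate_tokens` uses the 0.75 words-per-token rule of thumb from the footnote (so 50 tokens × 0.75 = 37.5 words), which real tokenizers will only approximate, and the layer texts are invented examples.

```python
WORDS_PER_TOKEN = 0.75  # rule of thumb from the text; real tokenizers vary


def estimate_tokens(text: str) -> int:
    """Rough token estimate from a whitespace word count."""
    return round(len(text.split()) / WORDS_PER_TOKEN)


def disclose(layers: list[str], depth: int) -> str:
    """Return the first `depth` layers joined together, core task first."""
    return "\n\n".join(layers[:depth])


layers = [
    "Summarize the attached incident report.",                        # Layer 1: core task
    "Keep it under 150 words and write for a non-technical reader.",  # Layer 2: constraints
    "Example opening: 'On Tuesday the login service failed.'",        # Layer 3: examples
    "Background: the service moved to a new host last month.",        # Layer 4: background
]

# Show how the estimated size grows as each layer is added.
for depth in range(1, len(layers) + 1):
    print(depth, estimate_tokens(disclose(layers, depth)))
```

Start at depth 1 and add a layer only when the response shows the model needs it.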

4. Uncertainty Acknowledgment

Build uncertainty handling into the context itself:

I’m providing X, but I’m uncertain about Y.

If you need Y to properly address this, please ask.

If you must make assumptions about Y, state them explicitly.

This prevents the hallucination cascade that occurs when models fill gaps without acknowledgment.

5. Context Refresh Protocols

Every 3-5 exchanges in a long conversation, explicitly refresh:

Let me summarize where we are:

- Started with: [original goal]

- Discovered: [key findings]

- Current focus: [present task]

- Next step: [proposed action]

Is this accurate? What am I missing?

This prevents semantic drift and maintains coherence.
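The refresh habit can be automated with a small counter. The `Conversation` class below is a hypothetical helper, not part of any library: it counts exchanges and fills in the summary template above when a refresh is due. The default interval of 4 is just one value inside the 3-5 range.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Hypothetical tracker that asks for a summary every few exchanges."""
    goal: str
    refresh_every: int = 4
    findings: list[str] = field(default_factory=list)
    focus: str = ""
    next_step: str = ""
    exchanges: int = 0

    def record_exchange(self) -> bool:
        """Count one exchange; return True when a refresh is due."""
        self.exchanges += 1
        return self.exchanges % self.refresh_every == 0

    def refresh_message(self) -> str:
        """Fill in the summary template from the tracked state."""
        found = "; ".join(self.findings) or "nothing yet"
        return (
            "Let me summarize where we are:\n"
            f"- Started with: {self.goal}\n"
            f"- Discovered: {found}\n"
            f"- Current focus: {self.focus}\n"
            f"- Next step: {self.next_step}\n"
            "Is this accurate? What am I missing?"
        )


conv = Conversation(goal="Choose a database for the clinic app", refresh_every=3)
due = [conv.record_exchange() for _ in range(6)]  # a refresh falls due on exchanges 3 and 6
conv.findings.append("Team already knows PostgreSQL")
conv.focus = "Hosting cost"
conv.next_step = "Compare two managed providers"
print(conv.refresh_message())
```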

6. Feedback Loops

Build verification into the context:

After you provide your response:

1. List your key assumptions

2. Rate confidence (1-10) on each major claim

3. Identify what additional information would most improve accuracy

This creates what researchers call “epistemic vigilance”—awareness of knowledge boundaries27.

7. Context Hygiene

Regular maintenance practices:

Prune irrelevant information every few exchanges

Consolidate repeated patterns into single instructions

Refactor when context becomes unwieldy

Archive successful patterns for reuse

5. The Practice: Context Engineering Exercises

Exercise 1: The Context Audit

Take a failed interaction (human or AI) and audit its context:

What was explicitly stated?

What was assumed but not stated?

Where did meanings diverge?

What context would have prevented the failure?

Exercise 2: The Flip Practice

Choose a problem you’re stuck on:

Write your current framing (50 words)

Identify three assumptions in that framing

Invert each assumption

Rewrite the problem from the inverted perspective

Find the synthesis between original and inverted

Exercise 3: Context Crafting Sprint

For your next AI interaction:

Before: Write context in three layers (core/constraints/examples)

During: Refresh context every 3 exchanges

After: Extract one reusable context pattern

Exercise 4: The Convergence Map

Identify a crisis in your work/life:

Map the parallel tracks (what’s operating independently)

Identify the pressure point (what’s forcing convergence)

Visualize the new configuration

Design the transition path

6. From Content to Context: The Great Inversion

Bill Gates popularized the phrase “Content is king” in his 1996 essay of that title, and it captured the early internet era perfectly. But we’re living through another inversion, one that started before generative AI took hold28:

The Old Paradigm:

Scarce: Information

Valuable: Having the right answer

Power: Controlling distribution

Expertise: Knowing facts

The New Paradigm:

Scarce: Attention and coherence

Valuable: Asking the right question

Power: Creating meaning

Expertise: Managing context

This isn’t just a shift—it’s a flip. Like the moment when humanity realized the Earth orbits the Sun, not vice versa. The information isn’t changing; our relationship to it is.

The Context Kingdom

If content is king, context is the kingdom—the entire ecosystem that gives the king meaning and power. A king without a kingdom is just someone with a fancy hat.

Consider how the same information transforms across contexts:

“Temperature”

77 °F → Weather: pleasant

100.4 °F → Body: fever

194 °F → CPU: overheating

“Growth: 50%”

→ Startup: struggling

→ Cancer: aggressive

→ Child: healthy

→ Economy: overheating

The content stays constant. The context determines everything.

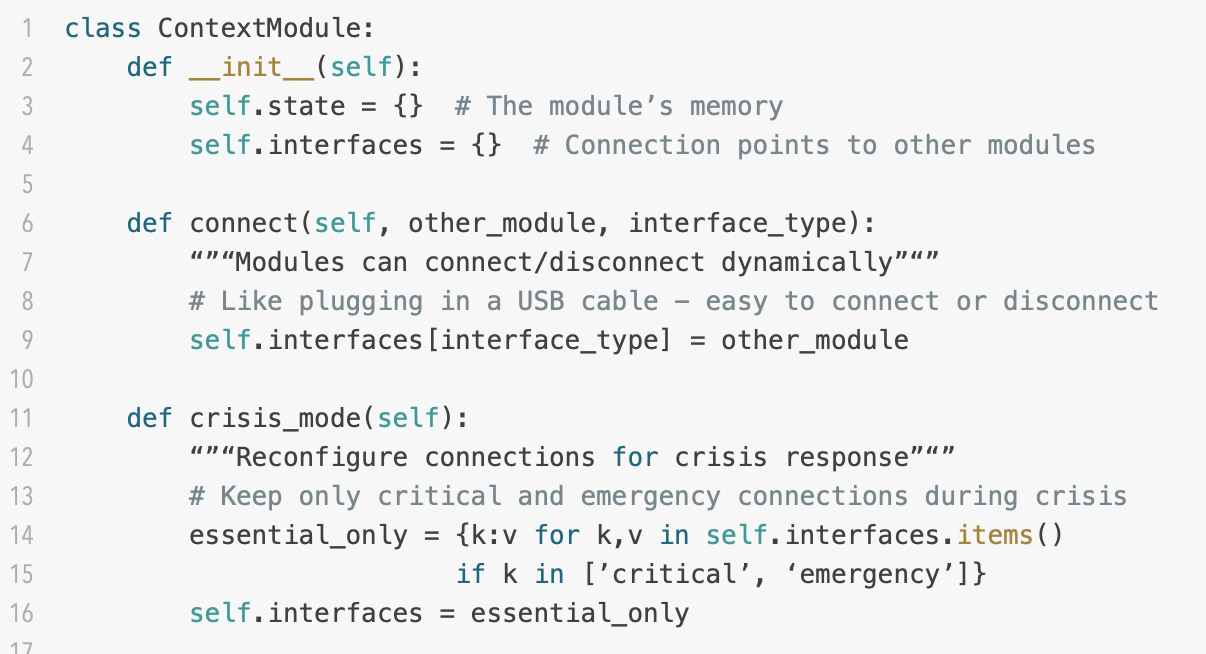

7. Crisis Architecture: Designing for Breakdown

Instead of avoiding crisis, what if we designed for it?

Principles of Crisis-Ready Systems

1. Loose Coupling with Clear Interfaces

Systems that can reconfigure quickly have parts that connect cleanly.

For non-technical readers: The code below describes a system that can plug and unplug its parts like LEGO blocks. During a crisis, it keeps only the essential connections and temporarily disconnects everything else.

In plain language: Imagine your phone during emergency mode—it shuts down all non-essential apps to preserve battery for critical functions. This code does the same thing for AI systems.
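A minimal sketch of that plug-and-unplug behavior follows. `ModularSystem` and its method names are hypothetical, invented for this illustration; the two components are stand-ins (`str.upper` and `str.title`) so the example stays runnable without any external service.

```python
from typing import Callable

Handler = Callable[[str], str]


class ModularSystem:
    """Hypothetical registry of pluggable components, some marked essential."""

    def __init__(self) -> None:
        self._components: dict[str, tuple[Handler, bool]] = {}
        self._crisis = False

    def plug(self, name: str, handler: Handler, essential: bool = False) -> None:
        """Connect a component; essential ones stay on during a crisis."""
        self._components[name] = (handler, essential)

    def unplug(self, name: str) -> None:
        """Disconnect a component permanently."""
        self._components.pop(name, None)

    def enter_crisis(self) -> None:
        """Suspend non-essential components without discarding them."""
        self._crisis = True

    def exit_crisis(self) -> None:
        """Bring suspended components back."""
        self._crisis = False

    def active(self) -> list[str]:
        """Names of the components that currently run."""
        return [
            name
            for name, (_, essential) in self._components.items()
            if essential or not self._crisis
        ]

    def run(self, message: str) -> dict[str, str]:
        """Send the message to every active component."""
        return {name: self._components[name][0](message) for name in self.active()}


system = ModularSystem()
system.plug("core_answer", str.upper, essential=True)
system.plug("style_polish", str.title)

system.enter_crisis()
print(system.active())   # only the essential component remains active
system.exit_crisis()
```

The design choice worth noticing is that crisis mode suspends components rather than deleting them, so recovery is a single call instead of a rebuild.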

2. Redundant Pathways

Multiple ways to achieve the same goal:

Primary: Optimal path under normal conditions

Secondary: Backup with acceptable degradation

Emergency: Minimum viable function

This mirrors how the brain maintains multiple routes between regions29.
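In software, the three tiers are often written as an ordered fallback chain. The sketch below assumes three invented pathway functions (`full_analysis`, `cached_summary`, `canned_reply`); the primary one fails on purpose to show the handoff.

```python
from typing import Callable

Pathway = tuple[str, Callable[[], str]]


def run_with_fallbacks(pathways: list[Pathway]) -> tuple[str, str]:
    """Try each pathway in order; return (name, result) for the first that succeeds."""
    errors = []
    for name, attempt in pathways:
        try:
            return name, attempt()
        except Exception as exc:  # broad on purpose: any failure moves to the next path
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all pathways failed: " + "; ".join(errors))


def full_analysis() -> str:
    raise TimeoutError("model unavailable")  # simulated primary failure


def cached_summary() -> str:
    return "summary from cache"


def canned_reply() -> str:
    return "Service degraded; please retry later."


used, result = run_with_fallbacks([
    ("primary", full_analysis),
    ("secondary", cached_summary),
    ("emergency", canned_reply),
])
print(used, result)
```

Returning the pathway name alongside the result lets the caller know it is running in a degraded mode, which matters when deciding whether to warn the user.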

3. Crisis Triggers and Protocols

Explicit detection and response patterns.

For non-technical readers: The YAML code below is like an emergency response checklist. YAML is just a way to write structured lists that computers can read—think of it as a very organized outline.

    crisis_detection:
      triggers:  # Warning signs that context is breaking
        - prediction_error > threshold  # Too many surprises
        - coherence_score < minimum  # Things stop making sense
        - conflict_count > maximum  # Too many contradictions
      response:
        immediate:  # First 30 seconds
          - pause_normal_operation
          - activate_emergency_context
          - increase_monitoring
        assessment:  # Next 2-5 minutes
          - identify_failure_point
          - map_available_resources
          - generate_options
        recovery:  # Following hours/days
          - implement_minimum_viable
          - gradually_restore_function
          - document_lessons

In plain language: This is exactly like a fire evacuation plan—if smoke is detected (trigger), then immediately sound alarm and evacuate (immediate response), then assess the situation (assessment), then carefully re-enter when safe (recovery).
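To show how such a checklist could drive behavior, here is a small Python sketch of the trigger check. The threshold numbers in `Thresholds` are placeholders, not recommendations, and how you would actually measure prediction error, coherence, or conflicts is left open; the function only compares whatever numbers you supply.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    """Hypothetical limits; the values are placeholders for illustration."""
    max_prediction_error: float = 0.5
    min_coherence: float = 0.6
    max_conflicts: int = 3


RESPONSE_PLAN = {
    "immediate": ["pause_normal_operation", "activate_emergency_context", "increase_monitoring"],
    "assessment": ["identify_failure_point", "map_available_resources", "generate_options"],
    "recovery": ["implement_minimum_viable", "gradually_restore_function", "document_lessons"],
}


def fired_triggers(prediction_error: float, coherence_score: float,
                   conflict_count: int, limits: Thresholds = Thresholds()) -> list[str]:
    """Return the name of every trigger whose condition is met."""
    checks = {
        "prediction_error": prediction_error > limits.max_prediction_error,
        "coherence_score": coherence_score < limits.min_coherence,
        "conflict_count": conflict_count > limits.max_conflicts,
    }
    return [name for name, tripped in checks.items() if tripped]


def respond(triggers: list[str]) -> dict[str, list[str]]:
    """Return the full response plan if any trigger fired, otherwise an empty plan."""
    return RESPONSE_PLAN if triggers else {}


triggers = fired_triggers(prediction_error=0.8, coherence_score=0.7, conflict_count=1)
plan = respond(triggers)
print(triggers, list(plan))
```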

8. The Biological Blueprint

When context collapses, biological and artificial systems follow remarkably similar recovery paths:

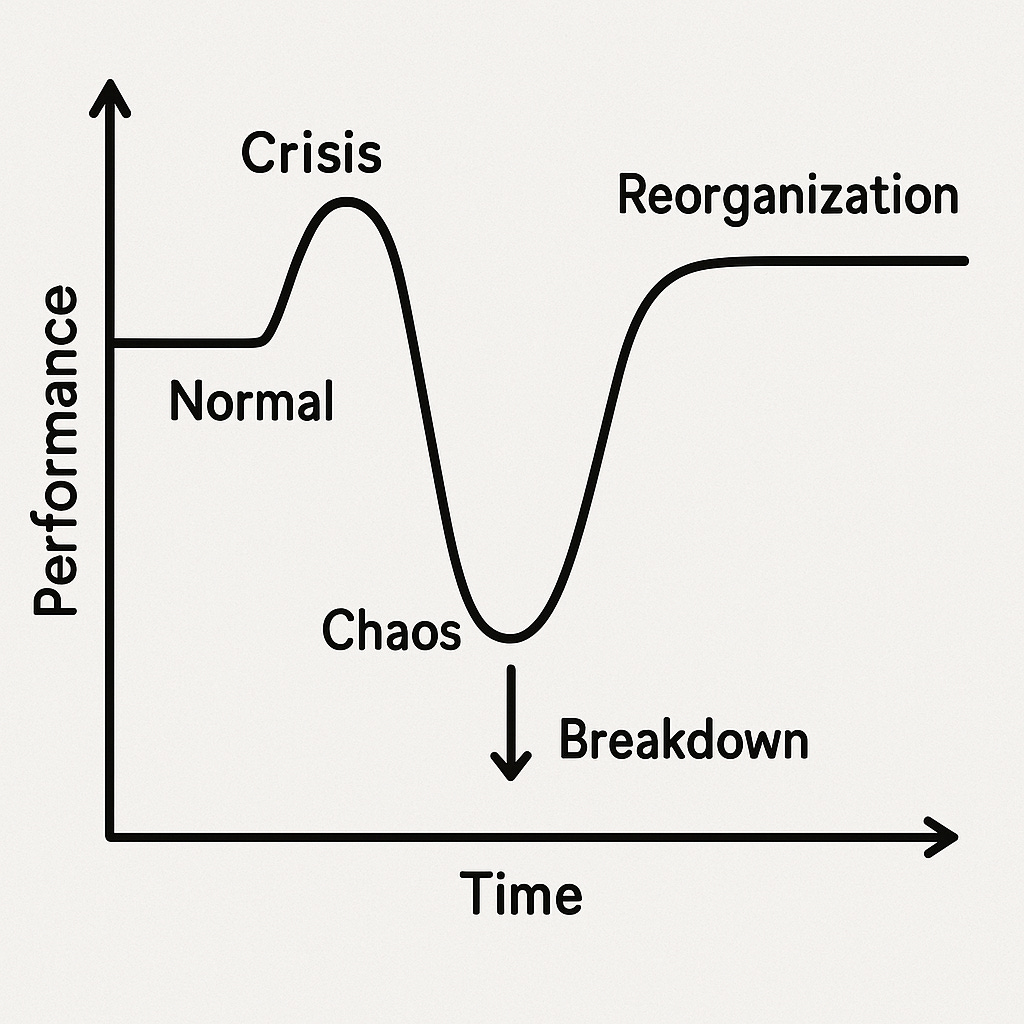

The Universal Crisis Response Curve

This pattern appears in:

Neuroscience: Neural reorganization after stroke30

Ecology: Ecosystem recovery after disturbance31

Psychology: Post-traumatic growth32

Organizations: Innovation following disruption33

AI Systems: Model adaptation to distribution shift34

The Four-Phase Protocol

Collapse (Seconds to Minutes)

Old patterns fail

Uncertainty spikes

System becomes fluid

Convergence (Minutes to Hours)

Disconnected elements interact

New connections form

Possibilities multiply

Crystallization (Hours to Days)

New pattern emerges

Structure stabilizes

Function returns

Consolidation (Days to Weeks)

Pattern reinforcement

Efficiency optimization

Memory formation

9. Case Studies in Context Crisis

Case 1: The Operating Room

Crisis: Unexpected arterial bleeding during routine surgery

Context Collapse: Standard procedure no longer applicable

Convergence: Surgeon, anesthesiologist, nurses synchronize without verbal coordination

New Context: Implicit shared model of emergency response

Lesson: Crisis can trigger collective intelligence exceeding individual capabilities35

Case 2: The GPT Conversation

Crisis: Model provides contradictory information across exchanges

Context Collapse: Instruction set has become internally inconsistent

Convergence: User identifies and removes conflicting directives

New Context: Cleaner, hierarchical instruction structure

Lesson: Context debugging is as important as prompt engineering36

Case 3: The Startup Pivot

Crisis: Product-market fit failure after 18 months

Context Collapse: Original vision no longer viable

Convergence: Customer complaints reveal unexpected use case

New Context: Complete business model transformation

Lesson: Crisis feedback contains innovation seeds37

10. Tools for Context Management

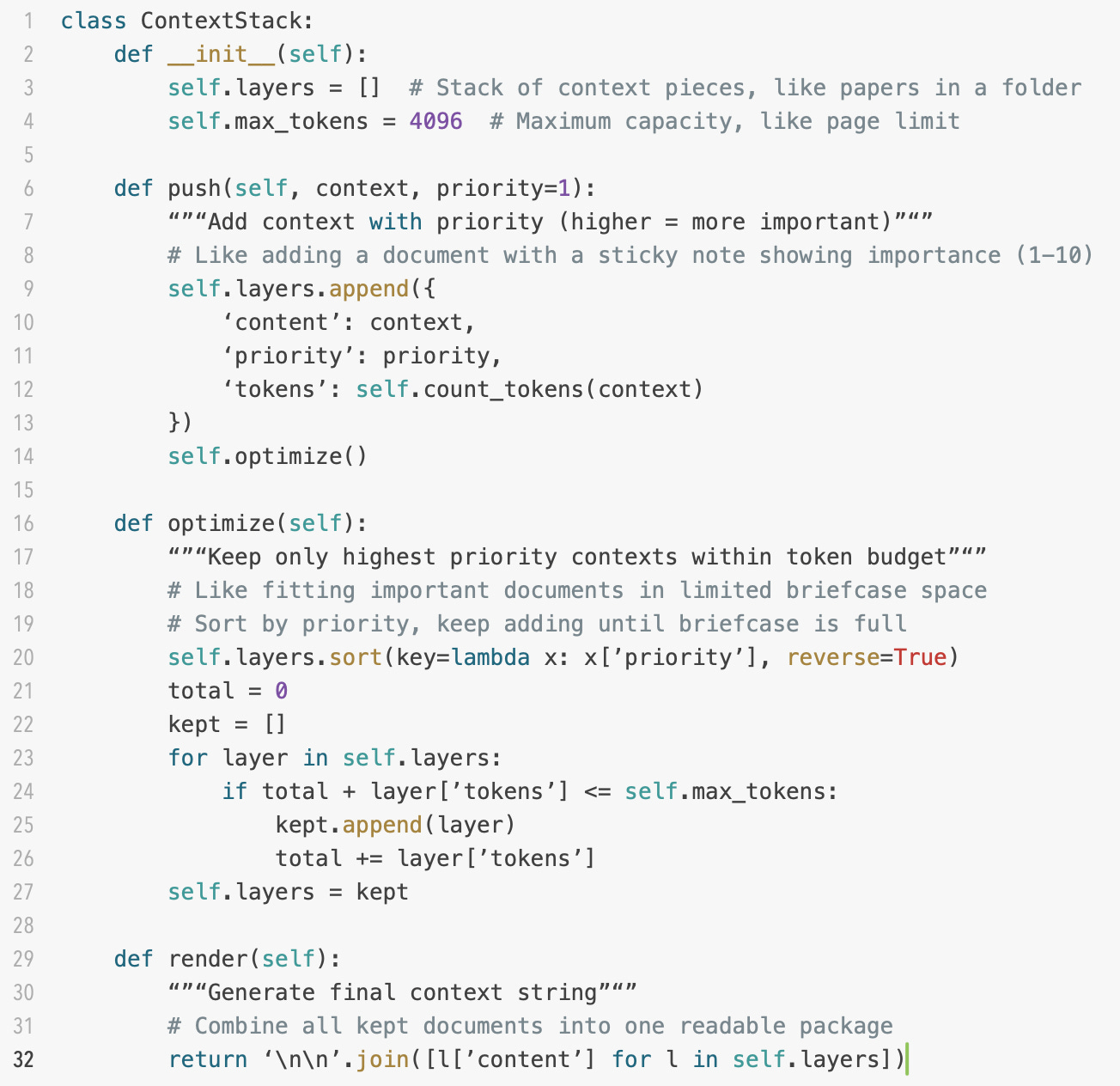

The Context Stack

A practical framework for managing context layers.

Think of the Context Stack like a smart spotlight, not a storage bin. At any given moment, an AI (or a person) can only “see” part of everything it knows — the slice that fits in its short-term focus. Because that space is limited, it has to spend its attention wisely, choosing what to bring into view.

Good context engineering is about picking the mix of details that are both useful and different enough to keep perspective fresh. That balance keeps the system from “overfitting” — getting trapped in one narrow view — and helps it respond with more creativity and relevance.

For non-technical readers: Think of this like organizing a briefcase where only the most important documents fit. The code below creates a smart filing system that automatically keeps the highest-priority information when space is limited.

In plain language: This is like having a smart assistant who, when your briefcase gets too full, automatically keeps only your most important documents and removes less critical ones. Priority 10 items (critical) stay, priority 1 items (nice-to-have) get removed first.
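The briefcase idea can be sketched as a fixed-budget store that drops the lowest-priority items first. `ContextStack` and `ContextItem` are hypothetical names for this illustration, and the token counts are hand-assigned estimates rather than output from a real tokenizer.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    text: str
    priority: int  # 10 = critical, 1 = nice-to-have
    tokens: int    # estimated size of the item


class ContextStack:
    """Hypothetical fixed-budget store that evicts low-priority items first."""

    def __init__(self, max_tokens: int) -> None:
        self.max_tokens = max_tokens
        self.items: list[ContextItem] = []

    def used(self) -> int:
        """Total estimated tokens currently held."""
        return sum(item.tokens for item in self.items)

    def add(self, item: ContextItem) -> list[ContextItem]:
        """Add an item, then evict until the budget fits; return what was evicted."""
        self.items.append(item)
        evicted = []
        while self.used() > self.max_tokens and self.items:
            # min() returns the first lowest-priority item, so older items go first on ties
            lowest = min(self.items, key=lambda i: i.priority)
            self.items.remove(lowest)
            evicted.append(lowest)
        return evicted


stack = ContextStack(max_tokens=100)
stack.add(ContextItem("Patient is allergic to penicillin", priority=10, tokens=40))
stack.add(ContextItem("Prefers morning appointments", priority=1, tokens=40))
evicted = stack.add(ContextItem("Current task: draft discharge summary", priority=8, tokens=40))
print([item.text for item in evicted])
```

Priority alone is a blunt rule. A fuller version might also weigh how recent or how distinctive an item is, in line with the point above about keeping the mix of details varied.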

The Context Refactoring Checklist

Before each major interaction:

[ ] Remove: What information is no longer relevant?

[ ] Consolidate: What patterns appear repeatedly?

[ ] Clarify: What assumptions need stating?

[ ] Structure: Are boundaries clear between sections?

[ ] Verify: Do instructions conflict anywhere?

[ ] Prioritize: What’s essential vs nice-to-have?

[ ] Test: Can you predict likely failure modes?

11. The Meta-Context: Systems That Learn Their Own Patterns

The next frontier isn’t just managing context—it’s creating systems that learn their own context patterns. This is where we transition from context craft to context architecture.

Self-Organizing Context

Imagine contexts that:

Monitor their own effectiveness

Identify their own breakdown patterns

Evolve their own optimization strategies

Teach other contexts their learnings

This isn’t science fiction. It’s emerging in:

Adaptive RAG systems that learn which retrievals work38

Meta-learning models that learn how to learn39

Evolutionary architectures that modify their own structure40

The Context Learning Loop

Context Performance → Pattern Recognition → Rule Extraction → Context Modification → Performance Measurement → [Repeat]

Each cycle makes the context more robust, more adaptive, more intelligent.

12. Practical Wisdom: What Three Years of Context Engineering Taught Me

The Paradoxes

The more context you provide, the less the model may understand

Solution: Hierarchical context with progressive disclosure

The clearer your instructions, the more rigid the output

Solution: Balance specificity with flexibility zones

The better your context, the more fragile it becomes

Solution: Build in redundancy and graceful degradation

The Principles

Start with state: Always declare what mode you’re in

Embrace boundaries: Clear delimiters prevent context bleed

Design for debugging: Make context inspection easy

Plan for breakdown: Crisis protocols should be pre-built

Archive successes: Reusable context patterns compound value

The Practices

Daily: Context hygiene (pruning, clarifying)

Weekly: Pattern extraction (what worked, what didn’t)

Monthly: Framework evolution (updating base templates)

Quarterly: Paradigm questioning (is our frame still valid?)

Field Guide: Context Craft Quick Reference

Before the Conversation

State Declaration

Mode: [Assistant / Partner / Explorer]

Constraints: [time / format / scope]

Success: [specific outcomes]

Unknowns: [what you need to discover]

During the Exchange

Every 3–5 Turns

Summarize progress

Verify understanding

Prune irrelevant context

Check for drift

After the Interaction

Pattern Extraction

What context worked?

What caused confusion?

What’s reusable?

What needs refinement?

Crisis Response

When Coherence Breaks

Pause and identify the failure point

Roll back to the last stable context

Rebuild with clearer boundaries

Test with simple verification

Document the breakdown pattern

The Bridge to Part III

We’ve explored how crisis transforms context, how context shapes meaning, and how to craft resilient cognitive frames. But individual context mastery is just the beginning.

The next challenge is collective context—how do we maintain coherence not just within one mind (biological or artificial) but across many? How do we build systems where the conversation itself learns, where context evolves through interaction, where meaning emerges from the network rather than any single node?

This is the shift from context as craft to context as architecture. From managing frames to designing fields. From individual coherence to collective intelligence.

And here’s what we’re only beginning to understand: this acceleration isn’t just personal—it amplifies outward. When one person makes the flip from crisis to convergence, they become a catalyst for others. The acceleration compounds. Human potential doesn’t just adapt; it multiplies.

In Part III, we’ll explore how this amplification works—not just within individual minds but across networks of human and machine intelligence. How the acceleration that begins in crisis becomes the amplifier effect that transforms entire systems.

The question becomes: If crisis reveals the hidden architecture of meaning, what happens when we deliberately design that architecture? What becomes possible when we stop managing context and start composing with it?

That’s where The Flip completes: Not in choosing between human and machine intelligence, but in recognizing that the space between them—the context layer—is where the future is being written.

Next: Part III — The Amplifier Effect

How contexts compose, how conversations learn, and why the space between human and machine intelligence is where the future emerges.

Author’s Note

This essay emerged from years of working at the intersection of medicine, education, and artificial intelligence, watching both humans and machines struggle with the same fundamental challenge: how to maintain coherence when contexts collapse. The patterns described here aren’t theoretical—they’re drawn from thousands of hours of direct observation, failed experiments, and unexpected breakthroughs.

For practitioners wanting to go deeper, I recommend starting with the exercises in Section 5, then gradually building your own context management protocols. The learning curve is steep, but the view from the other side is transformative.

Remember: The flip isn’t about choosing between human and machine intelligence. It’s about recognizing that crisis—in all its forms—is just intelligence remembering how to grow.

References & Footnotes

Clark, A. (2013). “Whatever next? Predictive brains, situated agents, and the future of cognitive science.” Behavioral and Brain Sciences, 36(3), 181-204. DOI: 10.1017/S0140525X12000477.

Miller, E. K., & Cohen, J. D. (2001). “An integrative theory of prefrontal cortex function.” Annual Review of Neuroscience, 24, 167-202.

Schuck, N. W., et al. (2016). “Human orbitofrontal cortex represents a cognitive map of state space.” Neuron, 91(6), 1402-1412.

McClelland, J. L., McNaughton, B. L., & O’Reilly, R. C. (1995). “Why there are complementary learning systems in the hippocampus and neocortex.” Psychological Review, 102(3), 419-457.

Botvinick, M. M., Cohen, J. D., & Carter, C. S. (2004). “Conflict monitoring and anterior cingulate cortex.” Trends in Cognitive Sciences, 8(12), 539-546.

Friston, K. (2010). “The free-energy principle: a unified brain theory?” Nature Reviews Neuroscience, 11(2), 127-138.

Yu, A. J., & Dayan, P. (2005). “Uncertainty, neuromodulation, and attention.” Neuron, 46(4), 681-692.

Gehring, W. J., et al. (1993). “A neural system for error detection and compensation.” Psychological Science, 4(6), 385-390.

Aston-Jones, G., & Cohen, J. D. (2005). “An integrative theory of locus coeruleus-norepinephrine function.” Annual Review of Neuroscience, 28, 403-450.

Bassett, D. S., et al. (2011). “Dynamic reconfiguration of human brain networks during learning.” PNAS, 108(18), 7641-7646.

Weick, K. E. (1993). “The collapse of sensemaking in organizations: The Mann Gulch disaster.” Administrative Science Quarterly, 38(4), 628-652.

Nickerson, R. S. (1998). “Confirmation bias: A ubiquitous phenomenon.” Review of General Psychology, 2(2), 175-220.

Chi, M. T., Glaser, R., & Farr, M. J. (Eds.). (1988). The Nature of Expertise. Lawrence Erlbaum.

Christensen, C. (1997). The Innovator’s Dilemma. Harvard Business Review Press.

Anthropic. (2024). “Use XML tags to structure your prompts.” Technical Report. Anthropic Docs.

Conway Morris, S. (2003). Life’s Solution: Inevitable Humans in a Lonely Universe. Cambridge University Press.

Kounios, J., & Beeman, M. (2009). “The Aha! moment: The cognitive neuroscience of insight.” Current Directions in Psychological Science, 18(4), 210-216.

Kranz, G. (2000). Failure Is Not an Option. Simon & Schuster.

Silver, D., et al. (2016). “Mastering the game of Go with deep neural networks and tree search.” Nature, 529(7587), 484-489.

Badre, D., & Wagner, A. D. (2007). “Left ventrolateral prefrontal cortex and the cognitive control of memory.” Neuropsychologia, 45(13), 2883-2901.

Anthropic. (2024). “Effective Context Engineering for Claude.” Documentation.

Anthropic. (2024). “Long context prompting tips.” Claude Docs.

Cowan, N. (2001). “The magical number 4 in short-term memory.” Behavioral and Brain Sciences, 24(1), 87-114.

Bullmore, E., & Sporns, O. (2009). “Complex brain networks.” Nature Reviews Neuroscience, 10(3), 186-198.

Murphy, T. H., & Corbett, D. (2009). “Plasticity during stroke recovery.” Nature Reviews Neuroscience, 10(12), 861-872.

Holling, C. S. (1973). “Resilience and stability of ecological systems.” Annual Review of Ecology and Systematics, 4, 1-23.

Tedeschi, R. G., & Calhoun, L. G. (2004). “Posttraumatic growth.” Psychological Inquiry, 15(1), 1-18.

Gersick, C. J. (1991). “Revolutionary change theories.” Academy of Management Review, 16(1), 10-36.

Kirkpatrick, J., et al. (2017). “Overcoming catastrophic forgetting in neural networks.” PNAS, 114(13), 3521-3526.

Manser, T. (2009). “Teamwork and patient safety in dynamic domains.” Acta Anaesthesiologica Scandinavica, 53(2), 143-151.

Ries, E. (2011). The Lean Startup. Crown Business.