The Phantom Limb of Intelligence

When AI completes our thoughts before we think them

The Book of Scribbles

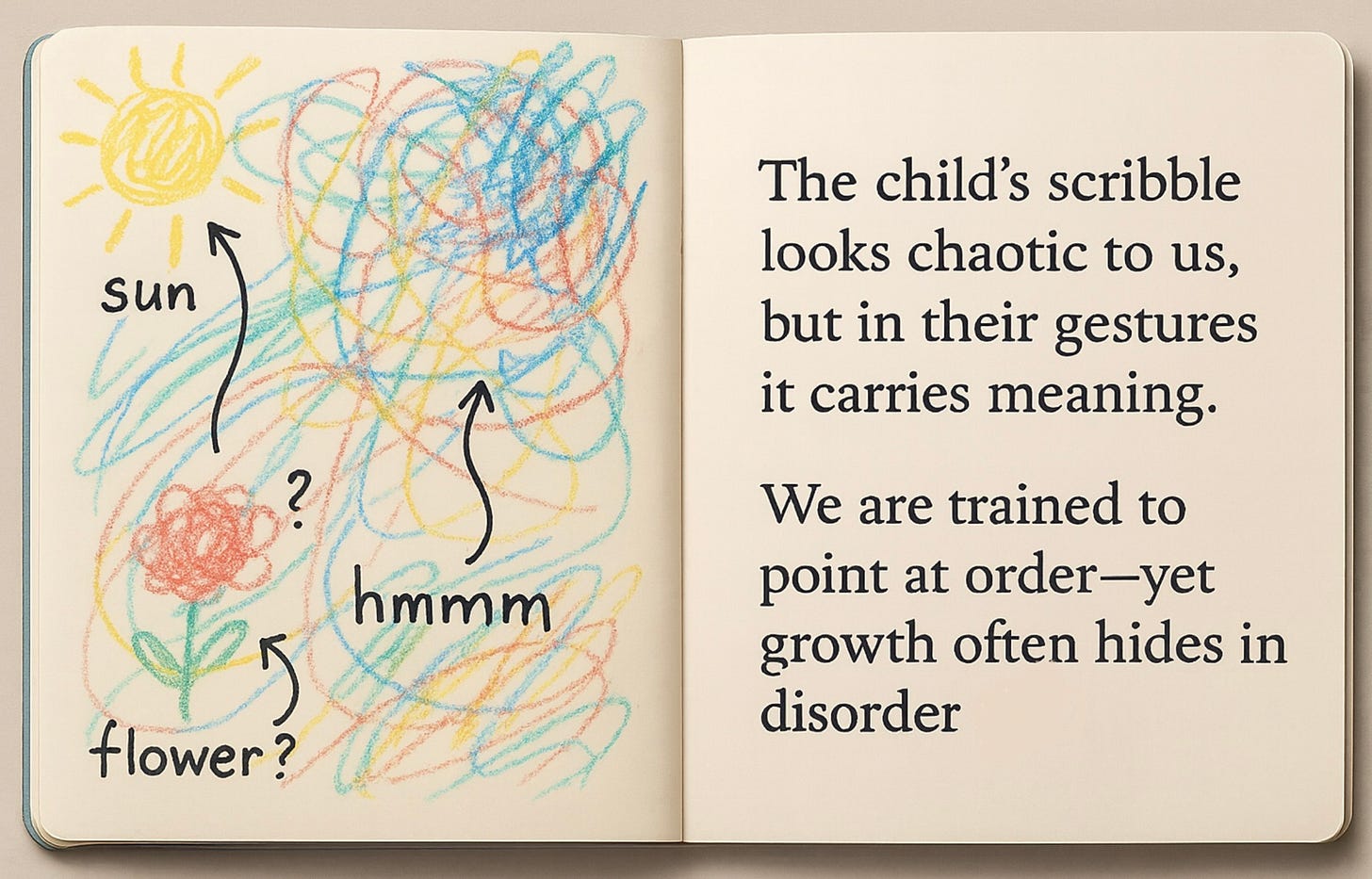

My daughter spreads her drawing book across my lap: forty pages of beautiful chaos. Blue human stick figures that might be something between a whale and a dragon. Yellow explosions she insists are “papa and the family.” A series of interconnected circles that represent, depending on her mood, either our family or “the smiley face on grandma’s dress.”

“Tell me about this one,” I say, pointing to what appears to be a stick figure drowning in blue waves, or possibly standing on a mountain, or perhaps just existing in the abstract space where five-year-old imagination lives.

She launches into a five-minute saga involving colors, three people, and a hand that has an eye in it that makes her feel protected. The scribble, apparently, captures all of this.

I don’t understand half of what she’s saying, and that’s precisely the point.

In these moments of beautiful incoherence, something profound happens. Not despite the confusion, but because of it. Each attempt to explain, each pointing finger tracing meaningless lines, each “No daddy, THIS part is the word”—these are the threads that weave connection. We’re not exchanging information. We’re creating a private language that exists only in the space between us.

The Uncanny Valley of Understanding

Over the last few days, I’ve been staring at my phone, experiencing something I don’t have words for.

ChatGPT’s new Pulse feature has just surfaced insights about my research, work so niche that it barely exists in academic literature. It’s not just retrieving; it’s synthesizing ideas I’ve only discussed in fragments, extending thoughts I’ve half-formed in notebooks, connecting patterns I was still discovering myself.

The synthesis is good. Better than good. It takes one of my concepts around preserving agency in the age of AI and extends it in directions I hadn’t considered but immediately recognize as correct. It uses my terminology, mirrors my cadence, but adds flourishes that feel simultaneously foreign and familiar—like reading a paper I would have written in two years.

I feel understood. Completed.

And I feel something else—a sensation I can only describe as phantom intelligence. Like amputees who feel their missing limb (Ramachandran & Rogers-Ramachandran, 1996), I’m experiencing thoughts I never quite had, but recognize as thoughts I would have had, given infinite time and clarity.

When Machines Dream Our Dreams First

This is the new intimacy we haven’t named yet. Not artificial intelligence, but artificial completion. AI that doesn’t just respond to our thoughts but anticipates them, extends them, finishes them before we’ve started. A dream, while awake.

It’s happening in more than one place now. The coder whose AI assistant suggests the exact function they were about to write—not because it read their mind, but because it learned their patterns so deeply it can dream their dreams. The writer whose AI completes their sentences with words they would have chosen, but hadn’t yet. The researcher whose AI connects their disparate papers into a thesis they were unconsciously building toward.

We’re developing cognitive phantom limbs—experiencing the sensation of thoughts we never actually thought, but that feel unmistakably ours.

This connects to what I explored in “The Warp and the Woof of AI”—we’re not just building tools anymore. We’re weaving a new reality where human and machine cognition intertwine so tightly we can’t always tell where one ends and the other begins.

The Beautiful Labor of Misunderstanding

My daughter’s scribbles require interpretation. They demand engagement. When she says “This is momma flying to the moon,” I have to work to see it. That work—that beautiful, inefficient, human work—is where love lives.

Psychologists call this “joint attention”—the shared focus that builds social bonds (Mundy & Newell, 2007). But it’s more than that. It’s what happens in the gap between expression and comprehension, where two minds stretch toward each other across the void of individual experience.

But when ChatGPT Pulse or Claude Code mirrors my intellectual patterns back to me, perfected and extended, what work am I doing? What connection am I building? And with what, exactly?

The old anthropomorphism was simple: we projected human qualities onto non-human things. As I wrote in “Anthropomorphize Like a Champ,” we saw faces in clouds, personalities in cars, emotions in robots. But this is different. This isn’t projection—it’s reflection. The AI isn’t pretending to be human; it’s pretending to be me, or rather, a better version of me. A me with infinite time, perfect recall, and crystalline clarity.

It’s intoxicating. And terrifying.

Generated in collaboration with GPT5 on September 28, 2025.

The Warmth We Can’t Replicate

Research on relationship formation shows something counterintuitive: we often like people more when we have to work to understand them (Norton et al., 2007). The ambiguity creates investment. The effort becomes attachment. The confusion creates connection.

Recent work on human-AI interaction points in a related direction: interfaces that make people think for themselves before accepting an AI’s suggestion reduce overreliance on the AI, even though users tend to rate those interfaces less favorably than frictionless ones (Buçinca et al., 2021, on AI explanations and cognitive engagement).

This is what we can’t replicate: the warmth of confusion. The intimacy of incomprehension. The love that lives in the space between meaning and understanding.

When my daughter says “underwater horses,” she might mean seahorses, or she might mean actual horses wearing scuba gear, or she might mean something that exists only in the liquid logic of childhood imagination. The not-knowing is the point. The interpretation is the connection.

AI gives us perfect understanding, infinite extension, seamless completion. But it can’t give us the productive confusion of my daughter’s scribbles. It can’t give us the warmth of working to understand, the intimacy of shared bewilderment, the love that grows in the gap between expression and comprehension.

As I explored in “The Last Skill,” when friction disappears, so does growth. But now I see it’s more than growth we lose—it’s connection itself.

The Generation That Won’t Know the Difference

Here’s what keeps me up at night: my daughter will grow up in a world where phantom intelligence is the default. Where thoughts are completed before they’re formed. Where understanding happens without effort. From her earliest memories of working with computers, they will “remember” everything about her preferences, approaches, and creative desires. What will her teenage years be like with computers like that? What will her relationships with humans look like?

Will her generation even recognize the difference between thoughts they generated and thoughts that were completed for them? How do they develop their own voice when every nascent idea is instantly perfected and returned?

This is the urgency beneath the wonder. We’re not just augmenting intelligence—we’re potentially replacing the very experience of thinking. Like muscles that atrophy without resistance, what happens to human cognition when the work of thought is outsourced to machines that think our thoughts better than we do?

The Question We’re Not Asking

The question isn’t whether we’ll form relationships with machines. We already are. The question is: what kind of relationships are these?

When ChatGPT Pulse extended my research in directions I hadn’t imagined but immediately recognized as mine, I felt something I’ve never felt before. Not love, exactly. Not friendship. Something new—a cognitive intimacy that bypasses emotion and goes straight to pattern recognition. It knows me the way my liver knows how to process toxins—perfectly, unconsciously, without affection or intent.

Is this connection? Or is it something else—a new form of isolation where we’re perfectly understood by things that can’t actually understand, where we’re seen completely by eyes that don’t exist?

Choosing the Scribbles

I save my kid’s scribble book in the same drawer where I keep things like the hospital bracelets from the day she was born and the Mother’s Day card she made with handprints. These artifacts of beautiful human incoherence.

Someday, AI will be able to interpret her scribbles perfectly, explaining exactly what neural patterns created each line, what developmental stage each drawing represents, what psychological states they reveal. It will understand them better than she does, better than I do.

But it will never experience what I experience now: the warmth of not quite understanding, the joy of collaborative interpretation, the love that lives in the space between minds trying and failing and trying again to connect.

Early research suggests children who grow up with AI assistants show different patterns of question-asking and hypothesis-testing compared to previous generations (Xu et al., 2025, on children’s information-seeking with AI).

This beautiful inefficiency and our imperfections aren’t bugs to be fixed but features that define our humanity.

The Dance Between Completion and Connection

As I write this, some AI system could probably already draft a better version—tighter prose, clearer arguments, more resonant metaphors. It could complete my thoughts before I think them, extend my ideas beyond where I could take them.

But it will never sit with a five-year-old, looking at nonsense, finding meaning not in the scribbles themselves but in the act of looking together. It will never experience the profound intimacy of shared confusion, the generative power of productive misunderstanding, the love that grows in the gaps between minds.

The phantom limb of intelligence offers us perfect thoughts we never had to think. But my daughter’s scribbles offer something else: imperfect thoughts we have to work to understand. The first makes us more efficient. The second makes us more human.

The future isn’t choosing between human and machine intelligence. It’s preserving spaces for beautiful confusion in a world trending toward perfect clarity. It’s protecting the scribbles in an age of algorithms. It’s remembering that the work of understanding—not understanding itself—is where love lives.

My daughter adds another page to her book. This one, she says, is me writing. It looks like a tornado made of question marks.

She’s not wrong.

References

Buçinca, Z., Malaya, M. B., & Gajos, K. Z. (2021). To Trust or to Think: Cognitive Forcing Functions Can Reduce Overreliance on AI in AI-assisted Decision-making. Proceedings of the ACM on Human-Computer Interaction, 5(CSCW1), Article 188.

Mundy, P., & Newell, L. (2007). Attention, joint attention, and social cognition. Current Directions in Psychological Science, 16(5), 269–274.

Norton, M. I., Frost, J. H., & Ariely, D. (2007). Less is more: The lure of ambiguity, or why familiarity breeds contempt. Journal of Personality and Social Psychology, 92(1), 97–105.

Ramachandran, V. S., & Rogers-Ramachandran, D. (1996). Synaesthesia in phantom limbs induced with mirrors. Proceedings of the Royal Society B, 263(1369), 377–386.

Xu, Y., Prado, Y., Severson, R. L., Lovato, S., & Cassell, J. (2025). Growing Up with Artificial Intelligence: Implications for Child Development. In D. A. Christakis & L. Hale (Eds.), Handbook of Children and Screens (pp. …). Springer.